How to Build Your Own AI Assistant Using Python (A Complete Guide)

There is no denying that artificial intelligence has fundamentally changed the way we write code, tackle complex problems, and manage our daily workflows. However, relying exclusively on generic, public AI tools like ChatGPT comes with some pretty frustrating limitations. These platforms lack critical context about your specific projects, they cannot dive into your local file system, and handing over sensitive business data to a third party is often a massive security risk. So, if public platforms fall short, what is the most effective alternative for supercharging your productivity as a developer?

The ultimate solution is to build your own AI assistant using Python. When you create a custom AI architecture, you reclaim total control over what your assistant can do, how much memory it retains, and where your data actually goes. Whether your goal is to design a smart agent that triggers your DevOps scripts, a tool to query proprietary company databases, or simply a highly customized internal chatbot, Python makes getting started surprisingly straightforward.

Throughout this comprehensive guide, we are going to dive into exactly why you should move away from public AI tools. We will walk through the step-by-step process of building your own assistant from scratch, explore advanced integrations like LangChain and local LLMs, and cover the industry best practices needed to keep your new tool secure and incredibly fast.

The Problem With Generic Models: Why Build Your Own AI Assistant Using Python

If you have ever tried leaning on a public AI model to help with highly specialized, niche tasks, you have probably slammed into the infamous “context limit” or dealt with frustrating hallucinations. But why do these platforms struggle so much with specific queries, and what is actually happening beneath the surface?

First and foremost, foundational AI models are trained on incredibly broad, generalized datasets. When you prompt them with a question about your unique infrastructure or a proprietary codebase, they can only rely on that generic knowledge. Because they lack continuous, long-term memory of your previous chats—and have zero access to your local HomeLab server—they simply cannot provide the highly accurate, context-aware answers you need.

Secondly, we have to talk about data privacy. Copying and pasting your proprietary source code, active API keys, or sensitive customer information into a public web application is a severe security vulnerability. Because of these inherent risks, many enterprise-level organizations have understandably placed strict bans on using public generative AI tools in the workplace.

Finally, web-based models completely lack the ability to execute real-world actions. Sure, a web UI can generate a brilliant Python script, but it cannot actually run that code on your local machine. It cannot trigger your CI/CD pipelines, and it cannot scan your local directories for errors. By building a custom AI, you bridge that gap, creating an autonomous agent that can actually “do” things rather than just “say” things.

Basic Solutions: Steps to Build Your Own AI Assistant Using Python

If you are ready to leave those limitations behind and engineer a highly tailored tool, here are the actionable steps to build a fully functional assistant from the ground up. By using standard Python programming paradigms, you can actually get a working prototype up and running in a matter of minutes.

- Set Up Your Python Environment: Always start by isolating your project workspace using a virtual environment to avoid messy dependency conflicts.

- Obtain API Credentials: Register and generate a secure API key from a leading model provider, such as OpenAI or Anthropic.

- Install Required Libraries: Use pip to install the model provider's software development kit (SDK) and a helper library for loading environment variables, such as `python-dotenv`.

- Write the Core Chat Loop: Program a continuous `while` loop that listens for user input, processes it, and returns the AI’s response.

- Define the System Prompt: Give your assistant a brain by setting its technical boundaries, persona, and specific instructions.

1. Preparing the Environment and API

As a best practice, you should always keep your project dependencies completely isolated. Open up your terminal, create a fresh directory dedicated to your AI build, and initialize a virtual environment. Once that is active, you need to ensure your credentials are safe. Proper integration starts with storing your sensitive API keys in a local `.env` file (for example, a single line reading `OPENAI_API_KEY=your-key-here`) rather than hardcoding them in your scripts.

```bash
mkdir custom_ai
cd custom_ai
python -m venv venv
source venv/bin/activate    # on Windows: venv\Scripts\activate
pip install openai python-dotenv
```

2. Writing the Core AI Logic

Now it is time to write the Python script that will act as your assistant’s brain. Rather than firing off single, disconnected prompts, you will use a while loop. This allows the script to maintain an ongoing, continuous conversation, making it feel much more like a real assistant.

```python
import os

from dotenv import load_dotenv
from openai import OpenAI

# Load the API key from the local .env file instead of hardcoding it
load_dotenv()
client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))

# Define system behavior (the assistant's persona and boundaries)
messages = [{"role": "system", "content": "You are an expert DevOps assistant."}]

print("AI Assistant started. Type 'exit' to quit.")

while True:
    user_input = input("\nYou: ")
    if user_input.lower() == "exit":
        break

    messages.append({"role": "user", "content": user_input})

    response = client.chat.completions.create(
        model="gpt-4",
        messages=messages,
    )

    ai_reply = response.choices[0].message.content
    print(f"\nAI: {ai_reply}")

    # Store AI response to maintain context memory
    messages.append({"role": "assistant", "content": ai_reply})
```

This simple yet powerful script gives you a context-aware chatbot that retains memory throughout your active session: because every user message and AI reply is appended to the `messages` list, and the full list is sent with each request, the model sees the entire conversation so far. From this starting point, tweaking the system prompt allows you to mold the assistant to fit your unique development workflow.

Advanced Solutions for Python AI Assistants

Once you have the foundation running smoothly, you will eventually hit the natural boundaries of standard API calls—usually involving long-term memory constraints or the need for local file access. This is exactly where advanced developer tools step in to elevate your project.

Using LangChain for Memory and Data Processing

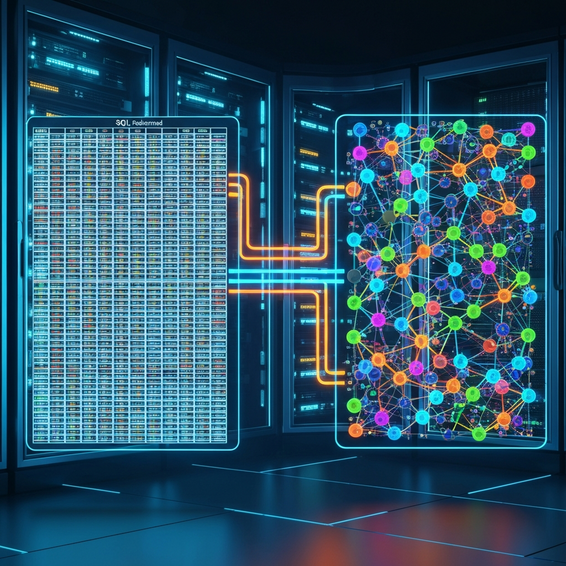

If you want your AI to actively read your internal documentation, scan your databases, or parse local files, LangChain is well worth learning. LangChain is a robust framework engineered specifically to bridge the gap between large language models and external data sources. Instead of manually pasting massive walls of text into your prompt, you use LangChain to split your documents into chunks, convert them into vector embeddings, and store them for Retrieval-Augmented Generation (RAG).

By leveraging LangChain, your AI can dynamically sift through hundreds of PDF manuals, complex DevOps strategies, or dense internal wikis, extracting only the specific passages required to answer your prompt. The system achieves this by connecting an embeddings model to a vector database. When you ask a question, the assistant converts it into a vector, retrieves the stored chunks whose vectors are most similar, and injects them into the context window. Compared with pasting entire documents, this drastically reduces your token costs, and because answers are grounded in retrieved source text, it significantly reduces (though does not eliminate) hallucinations.
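The retrieval step described above can be illustrated without any framework. The sketch below is not LangChain's API: it swaps in a toy bag-of-words vector (the hypothetical `embed` helper) for a real embeddings model, and a plain Python list for the vector database, purely to show how similarity ranking selects the chunks that get injected into the prompt. All function names here are illustrative.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embeddings model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, chunks, k=2):
    """Return the k chunks whose vectors are most similar to the question's."""
    query = embed(question)
    ranked = sorted(chunks, key=lambda chunk: cosine_similarity(query, embed(chunk)), reverse=True)
    return ranked[:k]


def build_prompt(question, chunks, k=2):
    """Inject only the retrieved chunks into the prompt instead of every document."""
    context = "\n\n".join(retrieve(question, chunks, k))
    return (
        "Answer using only the context below. If the answer is not in it, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical internal documentation, already split into chunks
docs = [
    "To restart the web server, run systemctl restart nginx.",
    "Backups run nightly at 02:00 to the S3 bucket.",
    "The VPN requires two-factor authentication.",
]
print(build_prompt("How do I restart nginx?", docs, k=1))
```

In a real setup, `embed` would call an embeddings model and the chunks would live in a vector store such as ChromaDB or Pinecone; the rank-then-inject flow stays the same.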

Implementing a Local LLM Setup

For developers handling highly classified source code or operating under strict compliance rules for client data, sending any queries out to public APIs is an immediate dealbreaker. In these scenarios, the ultimate advanced solution is setting up a local LLM. By utilizing local tools like Ollama or LM Studio, you can run remarkably capable open-source models, such as Llama 3 or Mistral, directly on your own hardware.

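Because these tools expose local APIs that Python can call (Ollama, for example, serves an HTTP API on your machine and offers an official `ollama` Python library), you can build and query your custom agent without your prompts ever leaving your machine. After the initial model download, no internet connection is required. This eliminates the risk of sending sensitive data to a third-party provider.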
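As a minimal sketch of querying a local model with only the Python standard library, the code below assumes Ollama is running on its default port (11434) and that a model named `llama3` has already been downloaded with `ollama pull llama3`. It posts the same `messages` list format used in the core chat loop to Ollama's `/api/chat` endpoint with streaming turned off. The helper names `build_payload`, `parse_reply`, and `chat_local` are hypothetical.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; nothing is sent beyond this machine.
OLLAMA_URL = "http://localhost:11434/api/chat"


def build_payload(model, messages):
    """Build the JSON body for Ollama's /api/chat endpoint (non-streaming)."""
    return json.dumps({"model": model, "messages": messages, "stream": False}).encode("utf-8")


def parse_reply(raw_body):
    """Extract the assistant's text from a non-streaming /api/chat response."""
    data = json.loads(raw_body)
    return data["message"]["content"]


def chat_local(messages, model="llama3"):
    """Send the whole conversation to the local model and return its reply."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(model, messages),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return parse_reply(response.read())


# Example usage (requires a running Ollama server):
# history = [
#     {"role": "system", "content": "You are an expert DevOps assistant."},
#     {"role": "user", "content": "How do I list running Docker containers?"},
# ]
# print(chat_local(history))
```

Under these assumptions, replacing the `client.chat.completions.create(...)` call in the core loop with `chat_local(messages)` is enough to move the assistant off a public API.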

Best Practices for Your AI Assistant

To ensure that the custom AI tool you build remains secure, fast, and cost-effective over time, you should adhere to these critical development best practices:

- Strict AI Data Privacy: Under no circumstances should you hardcode your API keys directly into your Python scripts. Load them from environment variables (for example, via a `.env` file) and double-check that `.env` is listed in your `.gitignore` before you push any code to GitHub or GitLab.

- Implement Streaming Output: Generating a long response can take an AI several seconds, which feels like an eternity to a user. By enabling streaming in your API call (`stream=True` in the OpenAI Python SDK), the text appears on screen token by token as it is generated, which creates a far more responsive user experience.

- Manage Token Limits: If you leave a script running for hours, the stored message list will eventually exceed the model's context window, and the API will start rejecting your requests. You can prevent this by coding a rolling memory function that silently drops the oldest messages, or by periodically asking the model to summarize earlier parts of the conversation and replacing them with that summary.

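- Add Function Calling: Take full advantage of the `tools` parameter in OpenAI's API. Instead of only returning text, the model can respond with a request to call one of the Python functions you have described to it, along with the arguments to use; your code then executes that function and sends the result back. This lets your assistant do things like monitor server uptime, automatically generate Jira tickets, or fetch tutorials directly from your database.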
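The rolling memory idea from the token-limits tip can be sketched in a few lines. This version is an approximation under stated assumptions: it estimates tokens with the common rule of thumb of roughly four characters per token rather than the model's real tokenizer, and the 3,000-token budget and the helper names `estimate_tokens` and `trim_history` are hypothetical choices.

```python
def estimate_tokens(message):
    """Rough estimate (assumption): about 4 characters per token for English text."""
    return len(message["content"]) // 4 + 1


def trim_history(messages, max_tokens=3000):
    """Drop the oldest user/assistant messages until the estimate fits the budget.

    A leading system prompt is always kept. Returns a new list; the input is untouched.
    """
    has_system = bool(messages) and messages[0]["role"] == "system"
    system = messages[:1] if has_system else []
    rest = list(messages[1:]) if has_system else list(messages)

    def total(msgs):
        return sum(estimate_tokens(m) for m in msgs)

    # Forget the oldest turns first, keeping the system prompt intact
    while rest and total(system + rest) > max_tokens:
        rest.pop(0)
    return system + rest
```

In the core chat loop, you would call `messages = trim_history(messages)` just before each `client.chat.completions.create(...)` request. For exact counts, OpenAI's `tiktoken` library can tokenize text the way its models do.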
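To make the function-calling tip concrete, here is a minimal dispatcher sketch. The tools `check_uptime` and `create_ticket` are hypothetical placeholders with dummy bodies, and the schema follows the JSON Schema shape that the `tools` parameter expects. The key design choice is the registry: the model can only ask for functions you have explicitly registered, so a hallucinated or malicious tool name never runs.

```python
import json


# Hypothetical local tools; replace the bodies with real checks or API calls.
def check_uptime(host):
    return f"{host} is up"


def create_ticket(summary):
    return f"Created ticket: {summary}"


# Only functions registered here can ever run, whatever the model asks for.
TOOL_REGISTRY = {"check_uptime": check_uptime, "create_ticket": create_ticket}

# JSON Schema descriptions passed to the API via tools=[...]; one entry shown for brevity.
TOOL_SCHEMAS = [
    {
        "type": "function",
        "function": {
            "name": "check_uptime",
            "description": "Check whether a server is reachable.",
            "parameters": {
                "type": "object",
                "properties": {"host": {"type": "string"}},
                "required": ["host"],
            },
        },
    }
]


def run_tool_call(name, arguments_json):
    """Execute a tool the model requested.

    The model never runs code itself: it returns a function name plus
    JSON-encoded arguments, and this dispatcher performs the actual call.
    """
    func = TOOL_REGISTRY.get(name)
    if func is None:
        return f"Error: unknown tool {name!r}"
    return func(**json.loads(arguments_json))
```

With the OpenAI Python SDK, you would pass `tools=TOOL_SCHEMAS` to `client.chat.completions.create(...)`. When the reply contains `tool_calls`, append that assistant message to `messages`, then for each call run `run_tool_call(call.function.name, call.function.arguments)` and append the result as a message with `"role": "tool"` and the matching `tool_call_id` before requesting the model's final answer.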

Recommended Tools and Resources

Engineering generative AI applications becomes much easier when you tap into the broader ecosystem of specialized tools. Here is a quick look at what you should consider adding to your development stack:

- OpenAI / Anthropic APIs: The reigning industry heavyweights when it comes to complex reasoning, coding, and logical tasks.

- LangChain & LlamaIndex: Two of the most widely used frameworks for designing context-aware agents capable of reading and processing your local files.

- Ollama: Arguably the most seamless tool available for downloading and running self-hosted, offline AI models on your personal hardware.

- ChromaDB / Pinecone: Highly efficient vector databases used to store the document embeddings necessary for your RAG systems.

- Gradio / Streamlit: Incredible Python libraries that allow you to instantly transform a basic terminal script into a sleek, interactive web interface.

FAQ Section

Is it hard to build an AI assistant in Python?

Not at all; it is actually very accessible. Even if you are a developer with relatively basic Python experience, you can easily code a functional chat assistant in fewer than 50 lines of code just by utilizing the official API wrappers supplied by the major AI companies.

How much does it cost to build a custom AI?

If you choose to run open-source local models on your own machine, you pay no API fees; your only costs are your hardware and electricity. If you prefer to connect to premium APIs like GPT-4, you are billed per token (the volume of text sent and received). For a solo developer using the tool lightly each day, this is often a small amount, but costs grow with longer sessions because the full message history is resent with every request.

Can I run my Python AI assistant entirely offline?

Absolutely. Once you have downloaded an open-source model through a platform like Ollama or Hugging Face, you can configure your entire Python application to run offline. This is the ideal setup for private home networks or corporate environments where maximum security is a non-negotiable requirement.

Conclusion

You no longer have to struggle with the limitations of generic web interfaces for your complex development workflows. Public models simply lack the deep context, system integration, and rigorous security measures needed for serious IT tasks. Carving out the time to build your own AI assistant using Python puts you in the driver’s seat, allowing you to create a deeply personalized tool that functions exactly the way you need it to.

You can begin simply by deploying a basic API loop that keeps your credentials in a `.env` file and establishes a chat connection. From there, you can scale the project up by integrating LangChain for smart document retrieval, or by transitioning to local models for unparalleled data privacy. By blending traditional programming concepts with these cutting-edge AI tools, you can dramatically boost your productivity and craft an indispensable asset for your everyday workflow.