Building Advanced n8n Automation Projects: A Technical Guide

Today’s IT environments demand far more than basic point-to-point connections. As your business scales, relying on standard cloud automation platforms often leads to frustrating rate limits, sky-high costs, and strict execution boundaries. That is exactly why diving into advanced n8n automation projects has become a true game-changer for developers, IT administrators, and DevOps engineers alike.

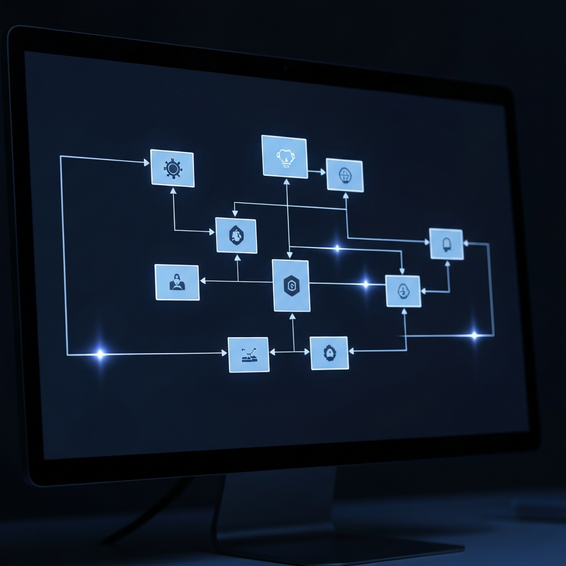

Unlike closed commercial SaaS alternatives, n8n is a source-available, fair-code licensed workflow orchestration platform. It gives you the freedom to build deep API integrations, write custom JavaScript or Python logic, and self-host the entire platform. However, orchestrating complex, multi-step workflows requires deliberate system architecture. Without a solid foundation, you will run into memory crashes, duplicate webhook executions, and tangled spaghetti logic.

In this technical guide, we will break down the most common bottlenecks that plague workflow execution, share actionable solutions, and walk through step-by-step blueprints for deploying highly scalable automated systems.

Why Bottlenecks Happen in Advanced n8n Automation Projects

When you make the leap from triggering simple webhooks to managing enterprise-grade data pipelines, the technical demands change drastically. Many engineers run into frustrating workflow failures the moment they deploy high-throughput systems. If you want to maintain stability, understanding the root causes of these bottlenecks is absolutely critical.

1. Memory Management and Payload Size

By default, n8n holds the data for each workflow execution in memory. If a node returns a massive result set, such as 100,000 records pulled straight from a PostgreSQL database, the Node.js process hosting your n8n instance can exhaust its available memory and crash. Heavy ETL (Extract, Transform, Load) tasks only run reliably once you introduce proper data batching.
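To make the batching idea concrete, here is a minimal Python sketch of chunked processing. It is not n8n's internal implementation; the helper name iter_batches and the generator standing in for a database cursor are assumptions for illustration only.

```python
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def iter_batches(items: Iterable[T], batch_size: int = 100) -> Iterator[List[T]]:
    """Yield lists of at most batch_size items without loading the whole input."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # emit the final, possibly shorter, batch
        yield batch


# Hypothetical usage: a generator stands in for a cursor streaming 250 rows.
rows = ({"id": i} for i in range(250))
sizes = [len(batch) for batch in iter_batches(rows, batch_size=100)]
print(sizes)  # [100, 100, 50]
```

Because the input is consumed lazily, only one batch is held in memory at a time, which is the property that matters for large result sets.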

2. Synchronous Processing Failures

If your workflow processes data synchronously and a third-party API suddenly times out, the entire execution fails unless the node is configured to retry or to continue on error. In advanced setups, relying on a single, continuous chain of calls creates a single point of failure that can break mission-critical automation.
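One common mitigation is retrying with exponential backoff. The sketch below shows the general pattern in plain Python; the function name call_with_retry, the default delays, and the flaky_api stand-in are all hypothetical, and the sleep function is injectable so the example runs instantly.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def call_with_retry(
    func: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call func, retrying on TimeoutError with exponentially growing delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return func()
        except TimeoutError:
            if attempt >= max_attempts:
                raise  # out of attempts: let the caller's error handling take over
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
            attempt += 1


# Hypothetical flaky call that times out twice before succeeding.
calls = {"count": 0}


def flaky_api() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TimeoutError("upstream timed out")
    return "ok"


delays: List[float] = []
result = call_with_retry(flaky_api, sleep=delays.append)
print(result, delays)  # ok [0.5, 1.0]
```

Retries only help with transient failures, so the final raise still matters: a call that keeps timing out should surface as an error rather than loop forever.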

3. State and Database Locking

When a flood of webhooks hits your server at the same time, race conditions become likely. Imagine two separate workflow executions trying to update the same ERP record at the same moment. Without locking or idempotency checks, the result can be lost updates, database deadlocks, or corrupted data.
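The following sketch illustrates why the read-modify-write step needs protection. It uses in-process threads and an in-memory dictionary as a stand-in for the ERP record, so the RecordStore class and the "sku-42" key are purely illustrative. Across separate n8n processes you would need a database-level row lock or a distributed lock instead.

```python
import threading
from collections import defaultdict
from typing import DefaultDict


class RecordStore:
    """In-memory stand-in for an ERP table, with one lock per record."""

    def __init__(self) -> None:
        self._stock: DefaultDict[str, int] = defaultdict(int)
        self._locks: DefaultDict[str, threading.Lock] = defaultdict(threading.Lock)
        self._guard = threading.Lock()  # serializes creation of per-record locks

    def _lock_for(self, record_id: str) -> threading.Lock:
        with self._guard:
            return self._locks[record_id]

    def adjust(self, record_id: str, delta: int) -> None:
        # The read-modify-write below is the critical section.
        with self._lock_for(record_id):
            current = self._stock[record_id]
            self._stock[record_id] = current + delta

    def get(self, record_id: str) -> int:
        return self._stock[record_id]


store = RecordStore()


def simulate_webhooks() -> None:
    """Each thread plays the role of one burst of concurrent executions."""
    for _ in range(1000):
        store.adjust("sku-42", 1)


threads = [threading.Thread(target=simulate_webhooks) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(store.get("sku-42"))  # 8000
```

Locking per record rather than globally lets updates to unrelated records proceed in parallel while still serializing writers that target the same one.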

Quick Fixes: Stabilizing Your n8n Foundation

Before you even think about building sophisticated multi-node systems, you need to establish a remarkably stable environment. Here are a few foundational fixes you should apply to your self-hosted n8n instance right away to guarantee maximum uptime.

- Implement the Split In Batches Node: You should never try to process massive arrays all at once. Instead, use the Split In Batches node (labeled Loop Over Items in recent n8n versions) to handle data in smaller chunks of 50 to 100 items. Smaller chunks limit how much data each downstream node has to hold at once.

- Enable Execution Pruning: Keep your database from bloating by configuring your environment variables. Simply add EXECUTIONS_DATA_PRUNE=true and EXECUTIONS_DATA_MAX_AGE=168 (the value is in hours, which equals 7 days) to your Docker Compose configuration.

- Switch to PostgreSQL Database: If you are still relying on SQLite (the default setup), it’s time to migrate over to PostgreSQL. SQLite locks the database during write operations, which severely limits concurrency when you have parallel webhook triggers firing.

- Use the Error Trigger Node: Set up a dedicated workflow that kicks off with an Error Trigger node. Whenever any of your main workflows encounter a failure, this node catches the error and instantly fires off an alert to your designated Slack or Discord channel.
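As a rough illustration of the alerting step, the sketch below turns an error event into a Slack-style message body. The field names (workflow.name, execution.lastNodeExecuted, execution.error.message) are assumptions modeled on the kind of data an error workflow receives, so check them against your own instance. The {"text": ...} shape is the simple payload accepted by Slack incoming webhooks.

```python
from typing import Any, Dict


def build_alert(error_event: Dict[str, Any]) -> Dict[str, str]:
    """Format an error event as a chat message, tolerating missing fields."""
    workflow = error_event.get("workflow", {})
    execution = error_event.get("execution", {})
    error = execution.get("error", {})
    text = (
        f"Workflow failed: {workflow.get('name', 'unknown workflow')}\n"
        f"Node: {execution.get('lastNodeExecuted', 'unknown node')}\n"
        f"Error: {error.get('message', 'no message')}"
    )
    return {"text": text}


# Hypothetical sample event; the values are invented for the example.
sample_event = {
    "workflow": {"name": "Nightly ERP sync"},
    "execution": {
        "lastNodeExecuted": "HTTP Request",
        "error": {"message": "connect ETIMEDOUT"},
    },
}
alert = build_alert(sample_event)
print(alert["text"])
```

Using .get with defaults keeps the alert path itself from failing when an event arrives with fewer fields than expected.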

3 Advanced n8n Automation Projects

Once your foundational setup is rock-solid, you can finally start tapping into n8n’s true potential. Below are three highly technical, developer-focused automation architectures that are powerful enough to replace entire custom-built microservices.

Project 1: AI-Powered DevOps Triaging Pipeline

Triaging failed deployments usually means someone has to manually read through hundreds of dense error logs. Instead, you can build an automated triage system using n8n, OpenAI, and GitHub.

- Trigger: A webhook receives a failed-deployment alert sent from your CI/CD pipeline (such as GitHub Actions or GitLab CI).

- Processing: An HTTP node steps in to pull the latest application error logs directly from your server or AWS CloudWatch.

- AI Integration: The workflow passes those logs to the OpenAI API (for example, the Chat Completions endpoint), asking a model to summarize the crash and recommend a specific code fix.

- Output: Finally, n8n automatically generates a Jira ticket containing the AI-generated solution and tags the assigned on-call engineer in Slack so they can take immediate action.
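The AI step above mostly comes down to building a well-bounded request. Here is a hedged sketch that trims the logs and assembles a chat-style request body with the "model" and "messages" fields. The character cap, the prompt wording, the function name, and the placeholder model name are all assumptions, and no network call is made.

```python
from typing import Any, Dict

MAX_LOG_CHARS = 8000  # assumed cap to keep the prompt within model limits


def build_triage_request(log_text: str, service: str, model: str) -> Dict[str, Any]:
    """Assemble a chat-style request body asking for a crash summary and a fix."""
    tail = log_text[-MAX_LOG_CHARS:]  # the most recent lines usually hold the crash
    return {
        "model": model,
        "messages": [
            {
                "role": "system",
                "content": (
                    "You are a DevOps assistant. Summarize the crash in the logs "
                    "and recommend a specific code fix."
                ),
            },
            {"role": "user", "content": f"Service: {service}\n\nLogs:\n{tail}"},
        ],
    }


# Hypothetical usage with an oversized log and a placeholder model name.
fake_logs = "INFO boot\n" * 2000 + "ERROR NullPointerException at Deploy.run\n"
request_body = build_triage_request(fake_logs, service="checkout-api", model="your-model-name")
print(len(request_body["messages"][1]["content"]))
```

Keeping the tail of the log rather than the head is a design choice: deployment crashes tend to show up in the last lines written before the process died.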

Project 2: Stateful Multi-Step E-Commerce Sync (ERP)

Each workflow execution is independent by default: one run does not share context with the next, so a process that spans several webhooks has nowhere to keep its progress. By bringing in Redis, you can construct stateful workflows suited to complex processes like order fulfillment.

- Trigger: A platform like WooCommerce or Shopify fires off a “New Order” webhook.

- Execution: n8n catches the data and writes the order ID alongside a “Pending” status directly into a Redis cache.

- Sub-Workflow: A separate n8n workflow calls the warehouse inventory API. If the item is out of stock, it updates the Redis state to “Backordered” and delays any further processing.

- Completion: Once the product finally ships, a final webhook hits n8n. The system pulls the original state back from Redis, updates the central ERP system, and neatly clears out the cache memory.
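The state handling in these steps can be sketched as a tiny key-value store. A plain dictionary stands in for Redis here, and the class name, key format, and method names are hypothetical. With a real Redis client the same flow would map onto set, get, and delete operations.

```python
from typing import Dict, Optional


class OrderStateStore:
    """Dictionary-backed stand-in for a Redis cache keyed by order ID."""

    def __init__(self) -> None:
        self._cache: Dict[str, str] = {}

    @staticmethod
    def _key(order_id: str) -> str:
        return f"order:{order_id}"

    def record_new_order(self, order_id: str) -> None:
        """New Order webhook: store the order with a Pending status."""
        self._cache[self._key(order_id)] = "Pending"

    def mark_backordered(self, order_id: str) -> None:
        """Inventory check failed: flag the order so processing is delayed."""
        key = self._key(order_id)
        if key not in self._cache:
            raise KeyError(f"unknown order {order_id}")
        self._cache[key] = "Backordered"

    def status(self, order_id: str) -> Optional[str]:
        return self._cache.get(self._key(order_id))

    def complete(self, order_id: str) -> str:
        """Shipment webhook: return the last known state and clear the entry."""
        return self._cache.pop(self._key(order_id))


store = OrderStateStore()
store.record_new_order("1001")
store.mark_backordered("1001")
previous = store.complete("1001")
print(previous, store.status("1001"))  # Backordered None
```

Returning the previous state from complete gives the ERP update step the context it needs, and removing the key in the same call avoids stale entries piling up.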

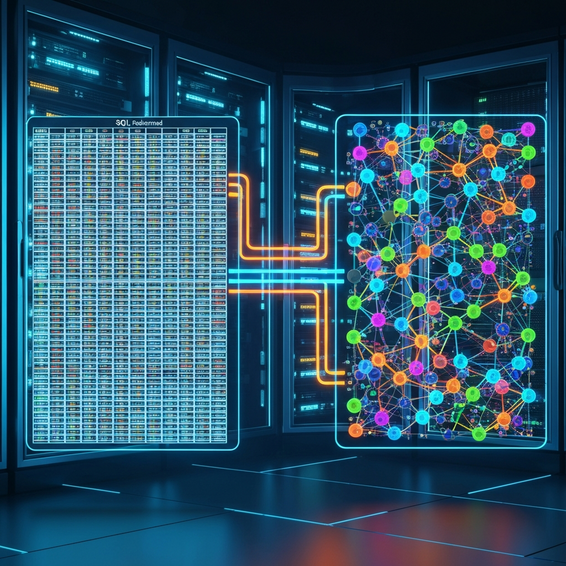

Project 3: Database ETL Pipeline with Webhook Queuing

If your system receives thousands of webhooks every minute from various IoT devices, a standard workflow will inevitably buckle and crash. To survive that kind of volume, you need a robust queue-based ingestion model.

- Ingestion: Spin up a lightweight n8n instance strictly dedicated to catching incoming webhooks. This instance’s only job is to immediately push the raw payload into a RabbitMQ or Redis queue.

- Processing Worker: A secondary, heavy-duty n8n instance consumes that queue. It pulls messages off in small batches, transforms the JSON payloads, and bulk-inserts them into a TimescaleDB or PostgreSQL database.
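The split between ingestion and processing can be sketched with Python's standard-library queue standing in for RabbitMQ or Redis. The function names ingest and drain_batch and the sensor payloads are invented for illustration, and a real worker would hand each batch to a bulk insert rather than just counting it.

```python
import json
import queue
from typing import Any, Dict, List


def ingest(q: "queue.Queue[str]", raw_body: str) -> None:
    """Ingestion side: enqueue the raw payload immediately, with no parsing."""
    q.put(raw_body)


def drain_batch(q: "queue.Queue[str]", max_items: int) -> List[Dict[str, Any]]:
    """Worker side: pull up to max_items messages and parse them into rows."""
    rows: List[Dict[str, Any]] = []
    while len(rows) < max_items:
        try:
            raw = q.get_nowait()
        except queue.Empty:
            break  # queue drained: return what we have
        # A malformed payload would raise here; a production worker
        # would route it to a dead-letter queue instead.
        rows.append(json.loads(raw))
    return rows


# Hypothetical usage: five device readings arrive, the worker takes them in batches of 3.
q: "queue.Queue[str]" = queue.Queue()
for i in range(5):
    ingest(q, json.dumps({"device": f"sensor-{i}", "temp_c": 20 + i}))

first = drain_batch(q, max_items=3)
second = drain_batch(q, max_items=3)
print(len(first), len(second))  # 3 2
```

The ingestion function does as little as possible on purpose: the faster it returns, the less likely a burst of webhooks is to overwhelm the receiving instance.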

Best Practices for Performance and Security

Deploying advanced n8n automation projects in a live production environment requires strict adherence to modern security and optimization standards. In short, you need to treat your workflows exactly like you would treat standard application code.

Keep Workflows DRY (Don’t Repeat Yourself)

Rather than copying and pasting the exact same authentication or API logic across ten different workflows, take advantage of the Execute Workflow Node. You can build a single “master” workflow that handles an API request, and then have all your other workflows call it as a sub-routine. This keeps your entire system modular and makes long-term maintenance significantly easier.

Secure Your Endpoints

You should never leave your production webhooks wide open to the public without proper validation in place. Always utilize Header Authentication inside the Webhook node. Set up a secure secret key that any external service must pass through the Authorization header. On top of that, make sure your self-hosted n8n instance sits safely behind a reverse proxy like Traefik or Nginx, complete with strict SSL/TLS enforcement.
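For services that validate the header themselves, for example a small proxy in front of n8n, the check can be sketched as follows. This is not how the Webhook node implements it internally; the function name is_authorized and the sample secret are assumptions. hmac.compare_digest is used so the comparison time does not reveal how much of the secret matched.

```python
import hmac
from typing import Mapping


def is_authorized(headers: Mapping[str, str], expected_secret: str) -> bool:
    """Return True when the Authorization header carries the expected secret."""
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value for name, value in headers.items()}
    supplied = normalized.get("authorization", "")
    # Constant-time comparison avoids leaking match length through timing.
    return hmac.compare_digest(supplied.encode(), expected_secret.encode())


# Hypothetical secret; in practice load it from an environment variable or vault.
SECRET = "example-shared-secret"

print(is_authorized({"Authorization": SECRET}, SECRET))
print(is_authorized({"authorization": "wrong"}, SECRET))
print(is_authorized({}, SECRET))
```

Treating a missing header as an empty string means unauthenticated requests fall through the same comparison path and are rejected like any other mismatch.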

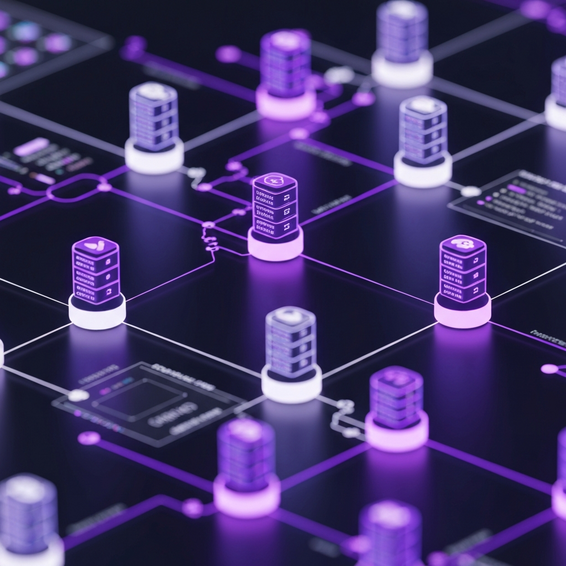

Isolate Through Workers

For setups that experience heavy demand, you should transition n8n from its “regular” mode over to “queue” mode. Queue mode uses Redis as the message broker and lets you run one primary instance that handles the UI and webhook ingestion, while multiple worker instances execute the heavy background logic. This enables you to scale horizontally as your data needs grow.

Recommended Tools / Resources

To successfully bring these advanced architectures to life, you are going to need incredibly reliable infrastructure. Here are the top developer-approved tools you should consider to support your self-hosted ecosystem:

- Hetzner Cloud / DigitalOcean: These are highly cost-effective cloud VPS providers that are absolutely perfect for running Docker-based n8n instances, offering dedicated CPUs and generous amounts of RAM.

- Docker & Portainer: Both are completely essential for seamlessly deploying and managing your various n8n containers, PostgreSQL databases, and Redis caches.

- PostgreSQL: The recommended database backend for production n8n. It handles concurrent writes far better than SQLite, which keeps workflow executions from stalling on the database during moments of high concurrency.

- Redis: A crucial piece of the puzzle for maintaining state across decentralized, multi-step workflow processes.

Frequently Asked Questions (FAQ)

What makes n8n better than Zapier for developers?

Because n8n is self-hostable and its source is openly available, it removes the per-task pricing and platform-imposed execution limits typical of SaaS platforms. With native support for multi-branch logic, custom JavaScript or Python execution, and advanced error handling, it functions as a developer tool rather than a basic consumer application.

How much RAM does self-hosted n8n need?

If you are just running a basic setup, 1GB of RAM will usually get the job done. However, for advanced n8n automation projects that process large datasets or execute multiple concurrent workflows, scaling up to a minimum of 4GB to 8GB of RAM is highly recommended. This will help you avoid those dreaded Node.js out-of-memory errors.

Can I write custom code inside n8n workflows?

Absolutely. The platform features a dedicated Code Node that lets you write plain JavaScript or Python. This gives you the power to manipulate intricate JSON arrays, run complex mathematical formulas, and perfectly transform your data before sending it along to the next step.
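As a rough idea of what such a transformation looks like, here is a standalone Python sketch. n8n passes data between nodes as a list of items that each wrap their payload under a json key; the function name add_order_totals and the quantity and unit_price fields are invented for the example. Inside an actual Code node you would read the input through the node's own helpers rather than a function argument.

```python
from typing import Any, Dict, List

Item = Dict[str, Any]


def add_order_totals(items: List[Item]) -> List[Item]:
    """Return new items with a computed total, leaving the inputs untouched."""
    output: List[Item] = []
    for item in items:
        data = item["json"]
        total = round(data["quantity"] * data["unit_price"], 2)
        output.append({"json": {**data, "total": total}})
    return output


# Hypothetical input in the item-per-record shape.
incoming = [
    {"json": {"sku": "A-1", "quantity": 2, "unit_price": 4.5}},
    {"json": {"sku": "B-7", "quantity": 1, "unit_price": 10.0}},
]
enriched = add_order_totals(incoming)
print([item["json"]["total"] for item in enriched])  # [9.0, 10.0]
```

Building new dictionaries instead of mutating the incoming ones makes the step easier to test and rerun.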

How do I handle workflow errors automatically?

You can easily build global error handlers by utilizing the native “Error Trigger” node. If a specific node happens to fail, your system can automatically catch the resulting JSON error output and instantly fire off an alert to your engineering team via email, SMS, or Slack.

Conclusion

Effectively scaling your internal operations means you have to graduate from simple task runners and move toward robust, developer-centric architectures. By leaning into advanced n8n automation projects, your team can finally bypass SaaS restrictions, slash monthly operating costs, and engineer custom data pipelines that map perfectly to your unique business logic.

The best way to begin is by stabilizing your infrastructure with PostgreSQL, implementing smart data batching, and making modular workflow design a daily habit. Once your system is fully secure and highly available, you can confidently roll out AI-powered triage systems, demanding database ETLs, and stateful multi-step processes to unlock unparalleled engineering productivity.