How to Build AI Automation With n8n: A Step-by-Step Guide

Let’s face it: in today’s fast-paced IT and DevOps environments, wrestling with manual data pipelines and repetitive administrative tasks is a massive bottleneck. Engineering teams and system admins are always on the hunt for ways to smooth out their operations without getting trapped in expensive, proprietary SaaS ecosystems. If you are currently struggling to scale your operational workflows, the best way forward is to learn how to build AI automation with n8n step by step.

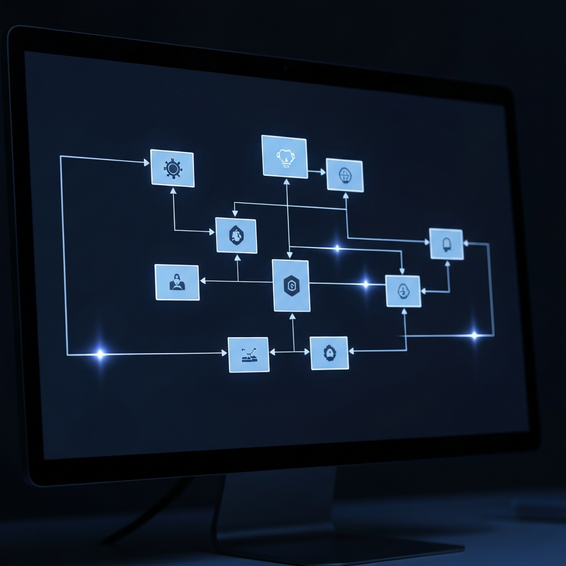

At its core, n8n is an incredibly powerful, node-based workflow automation tool that lets you seamlessly connect hundreds of different apps. When you introduce artificial intelligence into this mix, you transform your systems from basic data-pushers into smart, independent decision-makers. AI-driven workflows have the unique ability to read, interpret, and take action on unstructured data entirely on their own. Whether your goal is to summarize late-night server alerts, draft thoughtful responses to helpdesk tickets, or intelligently sort incoming webhook payloads, n8n is the perfect engine to get the job done.

Throughout this developer-friendly guide, we will dive into the fundamental concepts behind AI automation with n8n. We’ll walk you through how to securely hook up OpenAI, and we’ll share actionable, technical tips to help your self-hosted automation infrastructure run reliably from day one.

How to Build AI Automation With n8n Step by Step (Quick Summary)

If you genuinely want to reclaim your time, setting up an intelligent, automated pipeline is an absolute must. To successfully build AI automation with n8n step by step, you’ll generally follow this core sequence:

- Install the Platform: Deploy n8n locally using Docker, or take the easier route with the managed n8n Cloud.

- Initialize a Trigger: Set up an n8n webhook to actively listen for incoming, real-time data payloads.

- Authenticate AI Capabilities: Drop in the OpenAI node and securely enter your API credentials.

- Engineer Your Prompt: Give the LLM clear instructions to parse, summarize, or classify whatever unstructured text comes its way.

- Implement Routing: Add a Switch node to dynamically direct the workflow path based on the AI’s exact output.

- Test and Deploy: Run the workflow manually to double-check the data flow before pushing it live to production.

Why Traditional Automation Fails with Complex Data

Before we jump into the technical setup, it helps to understand why standard automation tools often drop the ball. Traditional workflow engines run strictly on deterministic, rule-based logic. To work properly, they demand perfectly formatted JSON or spotless CSV files. But as we all know, the real world is rarely that neat. Customer emails, internal team chats, and random user feedback are usually messy and highly unstructured.

The moment traditional automation systems bump into this kind of unpredictable text, they tend to break down. They can’t read between the lines to tell if a user is angry, they struggle to pluck a dynamic invoice number out of a rambling paragraph, and they certainly can’t translate a dense technical log into plain English. Because of this rigid architecture, developers are usually forced to write incredibly complex, fragile regular expressions (Regex) or custom Python scripts just to clean up the data before it’s even usable.

This frustrating bottleneck is exactly why so many teams are pivoting toward self-hosted n8n AI solutions. By dropping a Large Language Model (LLM) right into the middle of your data pipeline, you can skip most of that brittle string manipulation. An LLM works from context rather than exact patterns, so instead of crashing when data is formatted slightly off, it can standardize the input on the fly. Its output still deserves a sanity check before you act on it, but your automated workflows become far more resilient to messy input.

Basic Solutions: Setting Up Your First n8n Environment

Ready to get your hands dirty? Let’s walk through the foundational steps to spin up your n8n workflow environment. Following these simple, actionable steps will help guarantee a frictionless initial deployment.

- Install n8n Locally via Docker: If you want maximum control, running n8n via Docker is highly recommended. Just make sure Docker Desktop is installed, open up your terminal, and pull the official n8n image. This method keeps your environment tidy and perfectly isolates your self-hosted tools from other system dependencies.

- Access the n8n Visual Canvas: With your Docker container up and running, head over to localhost:5678 in your browser. You’ll immediately see the n8n visual canvas, the intuitive, node-based workspace where you’ll map out all your logic.

- Configure a Basic Schedule Trigger: Every workflow needs a starting line. Click “Add First Step” and pick the Schedule trigger, setting it to fire off every 15 minutes. It’s an incredibly easy way to test recurring background processes without having to rely on external data inputs right away.

- Add a Simple Output Node: Next, link up a generic node just to see the data flow in action. You might try the Hacker News node or a basic RSS Feed node to pull in a quick list of top stories.

- Execute and Verify the Workflow: Hit the “Execute Workflow” button at the bottom of your screen. If everything is wired up correctly, your Schedule trigger will kick off the next node, and you’ll see the fetched data pop up in a neatly structured JSON format. Just like that, you’ve built your baseline data pipeline!
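If the canvas does not load right after the container starts, n8n may simply still be booting. The sketch below polls the instance until it answers. It assumes the default port from the steps above and a `/healthz` endpoint on your n8n version; if that path is not available in your setup, point `url` at the editor address instead. The `fetch` and `sleep` parameters are illustrative hooks added here so the function can be exercised without a live server.

```python
import time
import urllib.request


def wait_for_n8n(url="http://localhost:5678/healthz", attempts=10, delay=2.0,
                 fetch=None, sleep=time.sleep):
    """Return True once `url` answers with HTTP 200, False after `attempts` tries."""

    def default_fetch(target):
        # urlopen raises an OSError subclass on refused connections and HTTP errors.
        with urllib.request.urlopen(target, timeout=5) as response:
            return response.status

    fetch = fetch or default_fetch
    for attempt in range(attempts):
        try:
            if fetch(url) == 200:
                return True
        except OSError:
            pass  # container is probably still starting; try again
        if attempt < attempts - 1:
            sleep(delay)
    return False
```

Calling `wait_for_n8n()` with no arguments checks the local instance for roughly 20 seconds before giving up.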

Advanced Solutions: Integrating OpenAI and Custom APIs

Now that you have a solid foundation, it’s time to roll up your sleeves and truly build AI automation with n8n step by step. By bringing advanced cognitive nodes into the mix, we can transform that basic linear pipeline into a highly intelligent, self-managing system.

1. Establish an n8n Webhook Setup

First things first: swap out your initial Schedule trigger for a Webhook node. Webhooks are fantastic because they allow external apps to push data into n8n the exact moment something happens, rather than forcing n8n to constantly ask if there’s new data. Set this node to listen for POST requests. It’s the ideal setup for catching real-time payloads from your CRM, email client, or bespoke internal tools.
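To see what such a push looks like from the sending side, here is a minimal sketch that builds a JSON POST aimed at the Webhook node. The path `support-ticket` and the payload fields are made up for illustration; use whatever path your Webhook node displays. It targets the `/webhook-test/` URL, which n8n serves while the node is listening for a test event; an activated workflow uses `/webhook/` instead.

```python
import json
import urllib.request


def build_webhook_request(base_url, path, payload):
    """Build a JSON POST request for an n8n Webhook node without sending it."""
    url = f"{base_url.rstrip('/')}/webhook-test/{path.lstrip('/')}"
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


request = build_webhook_request(
    "http://localhost:5678",
    "support-ticket",  # hypothetical webhook path
    {"customer": "jane@example.com", "message": "My invoice total looks wrong."},
)
# To actually send it: urllib.request.urlopen(request, timeout=10)
```

Building the request separately from sending it makes it easy to inspect the URL and body first, much as you would in Postman.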

2. Integrate OpenAI with n8n

Next, let’s give your workflow a processing brain. Click the plus icon on your canvas, search for the OpenAI node, and drop it in. You’ll need to link this up securely, so head over to your OpenAI developer dashboard, generate a fresh secret key, and paste it right into n8n’s built-in credential manager. Once you’re authenticated, pick the “Chat” operation and select a fast, cost-effective model like gpt-4o-mini.

3. Master Prompt Engineering in n8n

Now, draw a connection from your Webhook node directly to the OpenAI node. Inside the AI’s prompt text field, you can use n8n’s awesome drag-and-drop variables to inject whatever dynamic data just arrived via the webhook. Be clear and specific with your instructions. Try something like: “Analyze the following incoming customer message. Determine the user sentiment (Positive, Negative, or Neutral) and extract the main request into a short, concise summary.”
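For readers who want to see roughly what ends up being sent to the model, the sketch below assembles a Chat Completions request body around that instruction. It is an illustration rather than what the n8n node does internally. Asking for a JSON object with fixed keys is an addition here, chosen because it makes the reply easier to route later, and the field names `sentiment` and `summary` are assumptions.

```python
import json

# The instruction mentions JSON explicitly, which the API expects
# when the JSON response format is requested.
SYSTEM_PROMPT = (
    "You analyze incoming customer messages. "
    "Reply with a JSON object containing exactly two keys: "
    '"sentiment" (one of "Positive", "Negative", "Neutral") and '
    '"summary" (one short sentence describing the main request).'
)


def build_chat_payload(message, model="gpt-4o-mini"):
    """Return a Chat Completions request body for one customer message."""
    return {
        "model": model,
        "temperature": 0,  # keep classification output as stable as possible
        "response_format": {"type": "json_object"},
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": message},
        ],
    }


payload = build_chat_payload("The export button has been broken since Tuesday.")
print(json.dumps(payload, indent=2))
```

Inside n8n, the user message would come from an expression rather than a Python argument, for example `{{ $json.body.message }}` if the webhook payload carries a `message` field.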

4. Implement Advanced Routing

After the AI processes the text and spits out its analysis, use a Switch node to send the workflow down different paths dynamically. A Switch rule that checks for equality only matches the exact string, so it pays to have your prompt restrict the model to a fixed set of labels. For instance, if the AI flags a message with a “Negative” sentiment, you can route that data directly to a Slack node that pings your customer success team for immediate damage control. On the flip side, if the sentiment is “Positive,” you might route it to an email node that automatically fires off a friendly thank-you note.
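The routing decision itself is simple enough to sketch in a few lines. The version below assumes the model replies with a JSON object that has a `sentiment` key; the branch names and the `manual_review` fallback are hypothetical. The point it illustrates is defensive matching: trim and lowercase the label, and send anything unexpected to a safe default instead of letting it fall through.

```python
import json

# Branch names are placeholders for the Slack and email paths described above.
ROUTES = {"negative": "slack_alert", "positive": "thank_you_email"}


def choose_route(model_reply, default="manual_review"):
    """Map the model's JSON reply to a branch name, tolerating messy output."""
    try:
        sentiment = json.loads(model_reply).get("sentiment", "")
    except (json.JSONDecodeError, AttributeError, TypeError):
        return default  # not JSON, or not a JSON object
    if not isinstance(sentiment, str):
        return default
    return ROUTES.get(sentiment.strip().lower(), default)
```

In a real workflow the same normalization could live in a small step before the Switch node, so the Switch rules only ever see clean labels.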

5. Leverage the n8n HTTP Request Node

Sometimes you need to connect to a legacy internal tool or a niche third-party service that doesn’t have a shiny, pre-built node. That’s where the incredibly versatile n8n HTTP Request node comes to the rescue. This node gives you the absolute freedom to craft custom GET, POST, PUT, or DELETE requests, meaning your self-hosted n8n ecosystem is virtually limitless.
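Conceptually, configuring that node comes down to four things: a method, a URL, headers, and an optional JSON body. The sketch below mirrors that shape in plain Python. The inventory URL is invented, and the token would normally come from a credential store or environment variable rather than a literal.

```python
import json
import urllib.request

ALLOWED_METHODS = {"GET", "POST", "PUT", "DELETE"}


def build_request(method, url, payload=None, token=None):
    """Assemble a request from method, URL, optional JSON body and bearer token."""
    method = method.upper()
    if method not in ALLOWED_METHODS:
        raise ValueError(f"Unsupported method: {method}")
    headers = {"Accept": "application/json"}
    data = None
    if payload is not None:
        data = json.dumps(payload).encode("utf-8")
        headers["Content-Type"] = "application/json"
    if token:
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(url, data=data, method=method, headers=headers)


# Hypothetical internal endpoint; nothing is sent until urlopen() is called.
update = build_request(
    "PUT", "https://inventory.internal.example/api/items/42", {"stock": 7}
)
```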

Best Practices for Production Environments

Stepping up to a production-grade environment means you need to start taking security, performance, and overall reliability very seriously. Here are a few golden rules to keep in mind:

- Optimize API Costs and Rate Limiting: Letting an AI run wild can rack up a hefty bill if you aren’t paying attention. Make a habit of using smaller, snappier models like gpt-4o-mini for basic sorting and classification, reserving heavier models such as GPT-4 strictly for complex reasoning tasks. Also, don’t forget to use n8n’s Wait node to space out your API calls if you start bumping into rate limits.

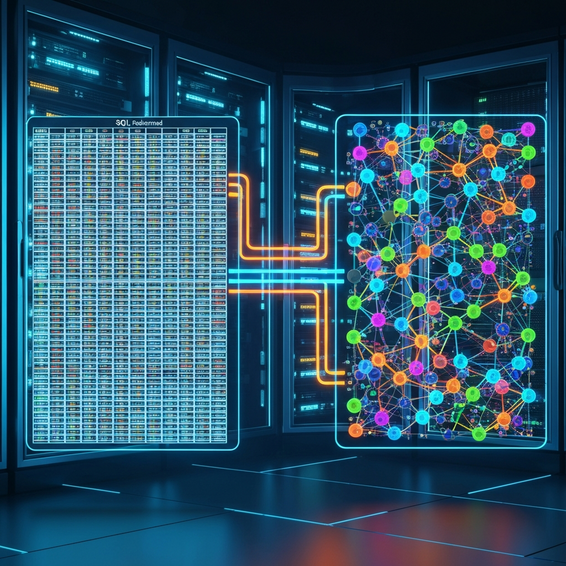

- Implement Fallback Error Handling: Let’s be real: external APIs go down, and AI models occasionally time out. Use n8n’s Error Trigger node to build a dedicated safety net. If a main execution fails, this fallback workflow can step in to automatically log the error in your SQL database or shoot a webhook alert over to your DevOps paging system.

- Secure Your Credentials Properly: It’s tempting to take shortcuts, but never hardcode sensitive API keys, database passwords, or auth tokens directly into your prompt fields or generic nodes. Always stick to n8n’s built-in credential vault, which safely encrypts your secrets at rest. That way, if you ever export your workflow as a JSON file to share with colleagues, your private keys won’t go along for the ride.
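The first two rules boil down to a familiar pattern: wait longer after each rate-limit failure, and hand off to a fallback once retries run out. The sketch below shows that logic in plain Python as an illustration of the idea, not of how n8n implements it. `RateLimitError` is a stand-in class defined here, and `on_failure` plays the role of the error workflow.

```python
import time


class RateLimitError(Exception):
    """Stand-in for an HTTP 429 response from the model API."""


def call_with_backoff(call, max_attempts=4, base_delay=1.0,
                      sleep=time.sleep, on_failure=None):
    """Run `call`, doubling the wait after each rate-limit error."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimitError as error:
            if attempt == max_attempts - 1:
                if on_failure is not None:
                    on_failure(error)  # e.g. log it or page the on-call engineer
                raise
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
```

With the defaults, a call that keeps hitting the limit is retried after 1, 2, and 4 seconds before the failure handler runs and the error is re-raised.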

Recommended Tools and Resources

Want to squeeze every ounce of potential out of your setup? Here are a few top-tier platforms and tools that pair beautifully with n8n. Remember, building on the right infrastructure is just as crucial as designing the workflows themselves.

- n8n Cloud: The official, fully managed version. It’s an absolute lifesaver if you want to skip the headache of server maintenance and jump straight into designing workflows.

- DigitalOcean Droplets: For the DIY crowd, DigitalOcean provides highly affordable Linux VPS instances that run Docker like a dream, making them perfect for your self-hosted node environments.

- OpenAI Platform: Your go-to hub for generating the API keys you’ll need to fuel those advanced text generation and data parsing nodes.

- Postman: A vital developer tool for testing out your new webhook endpoints. It lets you confirm your JSON payloads are formatted correctly before you start firing them into your live n8n instance.

Frequently Asked Questions (FAQ)

Is n8n completely free to use?

n8n operates on a fair-code license model, which means it’s entirely free to download, self-host, and use for your internal business processes. That said, if you’re looking for fully managed cloud hosting or enterprise-tier features—like granular user permissions and Single Sign-On (SSO)—they do offer premium paid tiers.

Do I need to know how to code to use n8n?

Not necessarily! You don’t strictly need to be a programmer to use n8n, thanks to its highly intuitive, visual drag-and-drop interface. However, having a baseline understanding of JSON structures, how APIs work, and a little bit of JavaScript will massively level up your ability to craft complex, custom data transformations.

How does n8n compare to Zapier for AI automation?

When it comes to deep technical flexibility, n8n generally has the edge over Zapier. It can handle complex JSON arrays natively and lets you build deeply nested branching logic without charging you per task. Plus, because you have the option to self-host, your main cost is the server itself, so scaling up an n8n instance is often much cheaper than Zapier’s volume-based pricing.

Can I integrate open-source AI models instead of OpenAI?

You absolutely can. By utilizing the n8n HTTP Request node or exploring community-built nodes, you can wire your workflow into models served locally by tools like Ollama or LM Studio, or into open models hosted on Hugging Face. Running the model on your own hardware keeps prompts and payloads inside your infrastructure, which makes it a strong option when data privacy matters. Keep in mind that a hosted endpoint still sends your data to a third party.
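As a rough illustration of the local route, the sketch below builds a request for Ollama’s `/api/generate` endpoint on its default port and pulls the text out of a non-streaming reply. The model name `llama3.2` is an assumption; substitute whichever model you have pulled. The same URL and JSON body could be entered into an HTTP Request node.

```python
import json
import urllib.request


def build_ollama_request(prompt, model="llama3.2", host="http://localhost:11434"):
    """Build a non-streaming generate request for a local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        f"{host}/api/generate",
        data=body.encode("utf-8"),
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def extract_reply(raw_body):
    """Return the generated text from a non-streaming Ollama response body."""
    return json.loads(raw_body)["response"]


# To send: urllib.request.urlopen(build_ollama_request("Summarize: ..."), timeout=60)
```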

Conclusion

For a growing share of workloads, tedious data processing no longer has to be done by hand. By tapping into intelligent nodes and setting up automated routing logic, developers and IT teams can ditch those repetitive admin tasks and channel their energy into high-impact engineering. Mastering how to build AI automation with n8n step by step isn’t just a neat trick; it’s a practical technical skill that can meaningfully boost your productivity and operational leverage.

My advice? Start small. Get your Docker environment running, configure a simple webhook, and experiment with your first OpenAI prompt. As you spend more time in the n8n visual canvas and get comfortable with how it flows, you can start weaving in complex routing, custom HTTP requests, and robust error handling. It’s time to embrace self-hosted AI automation, win back your valuable time, and start building digital infrastructure that genuinely thinks for itself.