How to Build Scalable Web Apps in the Cloud: Complete Guide

A sudden flood of website traffic is exactly what every business hopes for—until it brings your servers crashing down. Without a solid architecture in place, an unexpected surge in users can quickly turn your biggest success into a technical nightmare.

The fallout is rarely pretty: extended downtime, angry customers, and a noticeable hit to your revenue. Today’s users simply won’t wait around for a sluggish page to load. Plus, search engines like Google treat page speed as a ranking signal, so slow pages and frequent outages can also cost you search visibility.

Thankfully, modern cloud computing gives us the flexibility to tackle this exact problem head-on. Just remember, migrating your code to AWS or Azure doesn’t magically make your application bulletproof.

So, if you’re trying to figure out how to build scalable web apps in the cloud, you’ve definitely landed in the right spot. We’re going to walk through exactly why these systems fail under pressure, share some quick fixes you can apply right away, and dive into the advanced DevOps strategies you’ll need to future-proof your tech stack.

Why Scalability Bottlenecks Happen

More often than not, scalability roadblocks trace back to a rigid architecture or deep-seated structural flaws. Older, traditional hosting models run on fixed hardware setups, leaving them without the flexibility to stretch when demand suddenly spikes.

Picture what happens during an unexpected rush of traffic: your server’s CPU spikes to 100%, or its memory completely drains. Before long, incoming HTTP requests start piling up, timing out, or just dropping into the void entirely.

From a technical standpoint, this usually boils down to a handful of common design mistakes. For instance, a monolithic setup ships every feature in one giant codebase that deploys as a single unit, so you can’t scale one busy feature without scaling the entire application. On top of that, “stateful” applications keep session data locally in the server’s RAM.

Why is that a problem? Well, if a server hoards those local session states, trying to add more servers behind a load balancer becomes a massive headache. Unless the load balancer pins each user to one server (so-called sticky sessions), a user who gets routed to a different server will lose their session. Wrapping your head around these core bottlenecks is essentially step one in learning how to build scalable web apps in the cloud.
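To make the session problem concrete, here is a minimal sketch in Python. The class names are hypothetical, and a plain dictionary stands in for a shared store such as Redis; it only illustrates why local session state breaks once a second server joins the pool.

```python
from typing import Optional


class StatefulServer:
    """Keeps sessions in its own process memory (the problematic pattern)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.sessions: dict[str, str] = {}  # lives and dies with this server

    def login(self, session_id: str, user: str) -> None:
        self.sessions[session_id] = user

    def whoami(self, session_id: str) -> Optional[str]:
        return self.sessions.get(session_id)


class StatelessServer:
    """Holds no session data itself; every lookup goes to a shared store."""

    def __init__(self, name: str, shared_store: dict[str, str]) -> None:
        self.name = name
        self.store = shared_store  # assumption: stands in for Redis or similar

    def login(self, session_id: str, user: str) -> None:
        self.store[session_id] = user

    def whoami(self, session_id: str) -> Optional[str]:
        return self.store.get(session_id)


# Stateful: the session created on server A is invisible to server B.
server_a, server_b = StatefulServer("a"), StatefulServer("b")
server_a.login("s1", "alice")
print(server_b.whoami("s1"))  # None

# Stateless: any server can answer, because the state lives outside of them.
store: dict[str, str] = {}
server_c, server_d = StatelessServer("c", store), StatelessServer("d", store)
server_c.login("s1", "alice")
print(server_d.whoami("s1"))  # alice
```

In a real deployment the shared store would be a network service, so every lookup adds a small round trip. That is usually a fair price for being able to add or remove servers freely.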

We also have to talk about unoptimized database queries, another huge offender. You could have web servers capable of juggling thousands of requests simultaneously, but a poorly indexed database will still fall back to full table scans, hold locks longer than necessary, and drag your entire application’s response time to a crawl.
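A quick way to see what a missing index costs is to ask the database for its query plan. The sketch below uses Python’s built-in sqlite3 module with a made-up orders table; the table, column, and index names are assumptions for illustration, and production databases such as PostgreSQL or MySQL expose similar information through their own EXPLAIN commands.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> str:
    """Return the human-readable detail of EXPLAIN QUERY PLAN for a statement."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The fourth column of each row holds the plan description.
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 500, i * 1.5) for i in range(5000)],
)

lookup = "SELECT total FROM orders WHERE customer_id = ?"

# Without an index, SQLite has to scan every row of the table.
plan_before = query_plan(conn, lookup, (42,))

# With an index on the filtered column, it can search instead of scan.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, lookup, (42,))

print("before:", plan_before)
print("after: ", plan_after)
```

The exact wording of the plan varies between SQLite versions, but the first plan should mention a scan of the table and the second should name the index.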

Quick Fixes / Basic Solutions

If you’re currently watching your application buckle under heavy traffic and desperately need to keep things running, there are a few immediate lifelines you can throw it.

- Scale Vertically (Scale Up): The quickest way to handle an unexpected traffic wave is to simply throw more power at it. By bumping up the CPU cores and RAM of your existing cloud instance, you can temporarily sidestep the need for deep architectural rewrites, though you will have to endure a quick server restart.

- Implement a Content Delivery Network (CDN): Hooking up a modern CDN like Fastly or Cloudflare works wonders. By caching your static assets—think images, CSS, and JavaScript—at global edge locations, a CDN drastically cuts down both the bandwidth and the heavy lifting required from your origin server.

- Enable Object Caching: Try integrating an in-memory datastore such as Memcached or Redis. When you cache the results of the database queries that run most frequently, you keep your primary database from becoming the first bottleneck.

- Optimize Images and Static Assets: Take the time to minify your code and compress those massive media files. Trimming down your payloads translates to faster data transfers and reduces the number of lingering open connections dragging down your server’s performance.

- Utilize Browser Caching: By setting HTTP caching headers such as Cache-Control, you can tell a user’s browser to hold onto static files for a set period. This simple step prevents your returning visitors from downloading the exact same files over and over again.
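The object caching item in the list above usually follows a pattern called cache-aside: check the cache first, fall back to the database on a miss, then store the result with an expiry time. Here is a rough sketch of that flow. TTLCache and cached_query are hypothetical names, and the in-process dictionary is only a stand-in for Redis or Memcached.

```python
import time
from typing import Any, Callable, Optional


class TTLCache:
    """Tiny in-process stand-in for a store such as Redis or Memcached."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._items: dict[str, tuple[float, Any]] = {}

    def get(self, key: str) -> Optional[Any]:
        entry = self._items.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._items[key]  # expired: drop it and report a miss
            return None
        return value

    def set(self, key: str, value: Any, ttl_seconds: float) -> None:
        self._items[key] = (self._clock() + ttl_seconds, value)


def cached_query(
    cache: TTLCache, key: str, ttl_seconds: float, load: Callable[[], Any]
) -> Any:
    """Cache-aside: try the cache, fall back to the loader, then store the result.

    Note: a loader that returns None is never cached in this sketch.
    """
    value = cache.get(key)
    if value is None:
        value = load()  # the expensive call, e.g. a database query
        cache.set(key, value, ttl_seconds)
    return value


# Usage: the second call is served from the cache, so the loader runs once.
cache = TTLCache()
db_calls = []
top_products = lambda: db_calls.append("hit") or ["widget", "gadget"]
cached_query(cache, "top-products", 60, top_products)
cached_query(cache, "top-products", 60, top_products)
print(len(db_calls))  # 1
```

The time-to-live is the main design choice: a longer value takes more load off the database, while a shorter one limits how stale the cached answer can get.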

Advanced Solutions for Cloud Scalability

Band-aid fixes are great in a pinch, but future-proofing your application requires a structural rethink. Quick patches only delay the inevitable. If you want to see how senior IT teams and DevOps engineers actually approach high availability and sustained performance, here is the playbook.

1. Containerization and Microservices

We’re quickly moving past the days of dumping a massive monolithic application onto a single virtual machine. Today’s cloud-native applications approach things differently, slicing those bulky codebases into smaller, independent microservices that don’t heavily rely on one another.

Tools like Docker let you neatly bundle these services right alongside their necessary dependencies. Once everything is containerized, you can bring in Kubernetes to orchestrate the whole setup. Kubernetes acts like an automated manager: it constantly checks container health, restarts containers that fail, and can scale specific services up or down based on metrics such as CPU usage once you configure autoscaling.

2. Horizontal Scaling with Auto-Scaling Groups

Upgrading a single giant server only works until you hit its physical hardware limits. Horizontal scaling offers a better route by spreading your incoming traffic out across several smaller, identical servers. You can easily configure Auto-Scaling Groups through platforms like AWS (via EC2 Auto Scaling) or GCP (using Managed Instance Groups).

The beauty here lies in the automated policies you set. Let’s say you decide that whenever your average CPU usage hits 70%, the system should step in. The cloud provider will automatically spin up fresh instances, and once those instances boot and pass their health checks, a load balancer starts dividing user requests across your newly expanded server fleet. Because that startup can take anywhere from seconds to minutes, it pays to set the threshold low enough to leave some headroom.
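To get a feel for how a 70% CPU target turns into an instance count, here is a small sketch of a proportional scaling rule. It mirrors the formula documented for the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current × observed / target)); cloud auto-scaling groups apply their own policies with cooldowns and warm-up periods, so treat this as an illustration rather than any provider’s exact behavior. The function name and the min/max defaults are assumptions.

```python
import math


def desired_instances(
    current: int,
    avg_cpu_percent: float,
    target_cpu_percent: float = 70.0,
    min_instances: int = 2,
    max_instances: int = 20,
) -> int:
    """Proportional rule: ceil(current * observed / target), clamped to limits."""
    if current < 1:
        raise ValueError("current must be at least 1")
    if target_cpu_percent <= 0:
        raise ValueError("target_cpu_percent must be positive")
    raw = math.ceil(current * avg_cpu_percent / target_cpu_percent)
    # Never drop below the floor or grow past the ceiling you are willing to pay for.
    return max(min_instances, min(max_instances, raw))


print(desired_instances(current=4, avg_cpu_percent=90))   # 6  (scale out)
print(desired_instances(current=4, avg_cpu_percent=70))   # 4  (on target)
print(desired_instances(current=4, avg_cpu_percent=35))   # 2  (scale in)
print(desired_instances(current=10, avg_cpu_percent=300)) # 20 (capped at max)
```

The minimum keeps some redundancy during quiet periods, and the maximum acts as a cost guardrail if a bug or an attack drives the metric up.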

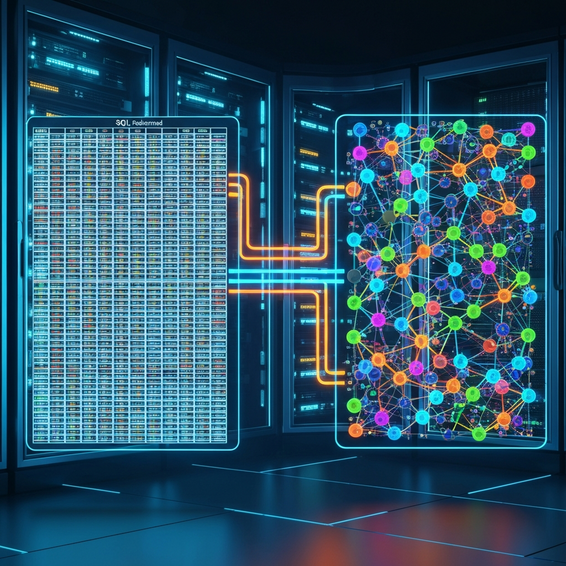

3. Database Read Replicas and Sharding

Ask any developer: databases are incredibly stubborn when it comes to horizontal scaling. A great starting point is bringing in database read replicas. Because the vast majority of web applications are “read-heavy,” these replicas take over the SELECT queries, freeing up your primary database to handle only the critical write operations.
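In application code, read replicas usually show up as a routing decision: reads go to a replica, everything else goes to the primary. The sketch below shows that idea with a hypothetical QueryRouter class and made-up server names. It deliberately ignores real-world wrinkles such as transactions, SELECT ... FOR UPDATE, and replication lag (replicas are often updated asynchronously, so a fresh write may not be visible on a replica right away).

```python
from itertools import cycle


class QueryRouter:
    """Send SELECT statements to replicas in turn; send everything else to the primary."""

    def __init__(self, primary: str, replicas: list[str]) -> None:
        self.primary = primary
        # cycle() yields the replicas in an endless round-robin loop.
        self._replicas = cycle(replicas) if replicas else None

    def route(self, sql: str) -> str:
        # Simplification: a statement counts as a read if it starts with SELECT.
        is_read = sql.lstrip().upper().startswith("SELECT")
        if is_read and self._replicas is not None:
            return next(self._replicas)
        return self.primary


router = QueryRouter("db-primary", ["db-replica-1", "db-replica-2"])
print(router.route("SELECT * FROM products"))          # db-replica-1
print(router.route("SELECT * FROM reviews"))           # db-replica-2
print(router.route("UPDATE products SET price = 10"))  # db-primary
```

Many frameworks and database proxies can do this routing for you; the point of the sketch is only to show where the split happens.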

When you’re dealing with massive, enterprise-level growth, you’ll want to look into database sharding. This strategy involves splitting your largest database tables across entirely separate database clusters, usually organized by a specific “shard key” like a user ID. With a well-chosen shard key, each cluster holds only a slice of the data, so no single database has to carry the weight of the entire dataset.
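Here is one simple way a shard key can be mapped to a cluster: hash the key and take the result modulo the number of shards. This is a sketch under assumptions (the function name and the choice of SHA-256 are mine), not the only scheme in use; range-based and directory-based sharding are common alternatives.

```python
import hashlib


def shard_for(user_id: int, shard_count: int) -> int:
    """Map a user ID to a shard index in the range [0, shard_count)."""
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")
    # A stable hash means every app server computes the same shard for the same user.
    digest = hashlib.sha256(str(user_id).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


# The same user always lands on the same shard.
print(shard_for(1001, 4) == shard_for(1001, 4))  # True

# Users spread across all shards rather than piling onto one.
counts = [0, 0, 0, 0]
for uid in range(10_000):
    counts[shard_for(uid, 4)] += 1
print(counts)
```

One caveat with plain modulo hashing: changing the shard count remaps most keys, which means a large data migration. Techniques such as consistent hashing exist to reduce that churn.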

4. Embracing Serverless Architecture

Not everyone wants to juggle instance management and containers. If that sounds like overkill for your needs, serverless computing might be the answer. Platforms such as Azure Functions or AWS Lambda let you execute code in direct response to system events, totally removing the need to provision servers yourself.

From there, the cloud provider takes the wheel on scaling. Whether your app gets a single click today or ten thousand requests a second tomorrow, serverless platforms add capacity automatically, within the provider’s concurrency limits and with the occasional “cold start” delay when a new instance spins up. Best of all, you’re billed for the requests and compute time your application actually used rather than for idle servers.
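For a sense of what serverless code looks like, here is a minimal function in the (event, context) handler shape that AWS Lambda uses for Python. The event layout assumes an HTTP request arriving through API Gateway’s proxy integration, and the greeting logic is invented for illustration. Other triggers and other providers pass differently shaped events.

```python
import json


def handler(event: dict, context: object) -> dict:
    """Return a JSON greeting; the platform decides how many copies run in parallel."""
    # queryStringParameters can be missing or null when no query string is sent.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local smoke test with a hand-built event; no cloud account needed.
response = handler({"queryStringParameters": {"name": "Ada"}}, None)
print(response["statusCode"], response["body"])
```

Notice that the function keeps no state between calls. That is what lets the platform start and stop copies freely as traffic changes.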

Best Practices for Cloud Optimization

Sticking to proven cloud-native best practices is the best way to make sure your app stays fast, secure, and budget-friendly as your audience expands.

- Design Stateless Applications: Make it a rule to never store uploaded files or active user sessions on your local application server. Instead, push those sessions into a distributed cache (like Redis) and funnel user uploads into object storage (like Amazon S3). This simple shift ensures that absolutely any server in your fleet can seamlessly handle any incoming request.

- Adopt Infrastructure as Code (IaC): Start managing your cloud resources with tools like Ansible or HashiCorp Terraform. Embracing IaC keeps your staging and production environments consistent, reproducible, and version-controlled within Git, as long as every change goes through the code rather than the console.

- Implement Robust Observability: The old saying holds true: you can’t scale what you can’t measure. Get observability tools like Datadog, Grafana, or Prometheus up and running. They allow you to hunt down memory leaks, keep an eye on CPU spikes, and pinpoint sluggish database queries long before your users ever notice a hiccup.

- Automate CI/CD Pipelines: For safe and seamless updates, rely on deployment pipelines through GitLab CI, Jenkins, or GitHub Actions. By weaving in automated testing alongside smart deployment methods like Canary or Blue/Green releases, you drastically lower the risk of pushing broken code that could take down your production servers.

- Prioritize Cloud Security: Always isolate your databases inside private subnets within a Virtual Private Cloud (VPC). On top of that, set up a Web Application Firewall (WAF) to filter malicious requests, including application-layer DDoS floods, which have a nasty habit of disguising themselves as normal, organic traffic spikes. Large volumetric attacks are better handled by your provider’s dedicated DDoS protection.
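The canary releases mentioned in the CI/CD item above come down to one decision per request: does this user see the new version or the stable one? A common approach is to hash the user ID into a bucket from 0 to 99 and compare it with the rollout percentage. The sketch below shows that idea; release_for is a hypothetical helper, and in practice this logic often lives in a load balancer, service mesh, or feature-flag service rather than in application code.

```python
import hashlib


def release_for(user_id: str, canary_percent: int) -> str:
    """Deterministically assign a user to the 'canary' or 'stable' release."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"


# Roll the new version out to roughly 10% of users.
users = [f"user-{n}" for n in range(10_000)]
canary_users = sum(release_for(u, 10) == "canary" for u in users)
print(f"{canary_users / len(users):.1%} of users see the canary")
```

Because the bucket depends only on the user ID, each user keeps seeing the same version during the rollout, and raising the percentage only ever moves users from stable to canary.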

Recommended Tools and Resources

Building and maintaining an architecture that truly scales requires having the right tools in your belt. If you’re looking for industry-standard recommendations for cloud deployments, here’s a solid list to get you started:

- Top Cloud Providers: When it comes to highly reliable auto-scaling features, Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure remain the top contenders in the market.

- Container Orchestration: Use Docker when you need to containerize your applications, and rely on Kubernetes (K8s) to manage all of those containers effectively at scale.

- Infrastructure Provisioning: For scripting your infrastructure both securely and consistently, HashiCorp Terraform is an incredible asset.

- Managed Database Solutions: Platforms like Google Cloud SQL, Amazon RDS, and MongoDB Atlas take the stress out of data management by offering automated backups and high availability right out of the box.

- Performance Monitoring: To get a clear, deep-dive view into your application’s overall health and real-world scaling limits, tools like New Relic and Datadog are practically indispensable.

Frequently Asked Questions (FAQ)

What does it mean to build scalable web apps in the cloud?

At its core, it means engineering an application architecture capable of automatically assigning and releasing computing resources—like RAM, CPU, and network bandwidth. A truly scalable app can gracefully handle wildly fluctuating traffic levels without requiring someone to manually intervene, and without any noticeable drop in performance.

Horizontal vs. vertical scaling: Which is better?

Vertical scaling is all about pumping more power (like extra RAM or CPU) into a single machine. While it’s definitely the simpler route, you’ll eventually hit a hardware ceiling, and you’re left dealing with a single point of failure. Horizontal scaling, on the other hand, means adding more machines into a shared pool. It raises the ceiling far higher and adds redundancy, provided the application is stateless and the database can keep up, which is why it’s the usual choice for robust cloud applications.

Do I need microservices to be highly scalable?

Not always! A thoughtfully optimized, modular monolith can actually handle massive waves of traffic just fine—provided it’s backed by a reliable load balancer, smart caching, a global CDN, and database read replicas. That said, microservices do offer unmatched flexibility if you’re coordinating with a massive, enterprise-level engineering team.

How do load balancers work in cloud environments?

Think of a load balancer as an intelligent traffic cop stationed right in front of your servers. It takes in incoming requests from clients and strategically routes them to healthy servers using specific algorithms, such as “Least Connections” or “Round Robin.” If one server goes offline, the load balancer’s periodic health checks detect the failure, and it stops sending traffic that way.
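The two algorithms named above are simple enough to sketch in a few lines. The Backend and LoadBalancer classes below are hypothetical and skip everything a real load balancer does around networking and health probing; they only show how each strategy picks a server and how an unhealthy one drops out of the rotation.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool = True          # would be updated by periodic health checks
    active_connections: int = 0   # would be tracked as requests open and close


class LoadBalancer:
    def __init__(self, backends: list[Backend]) -> None:
        self.backends = backends
        self._next = 0  # position in the round-robin rotation

    def _healthy(self) -> list[Backend]:
        pool = [b for b in self.backends if b.healthy]
        if not pool:
            raise RuntimeError("no healthy backends available")
        return pool

    def round_robin(self) -> Backend:
        """Hand out healthy backends one after another, wrapping around."""
        pool = self._healthy()
        chosen = pool[self._next % len(pool)]
        self._next += 1
        return chosen

    def least_connections(self) -> Backend:
        """Pick the healthy backend currently serving the fewest connections."""
        return min(self._healthy(), key=lambda b: b.active_connections)


web1, web2, web3 = Backend("web-1"), Backend("web-2"), Backend("web-3")
lb = LoadBalancer([web1, web2, web3])
print([lb.round_robin().name for _ in range(4)])  # ['web-1', 'web-2', 'web-3', 'web-1']

web2.healthy = False  # a failed health check takes web-2 out of the pool
web1.active_connections, web3.active_connections = 5, 2
print(lb.least_connections().name)  # web-3
```

Round Robin works well when requests cost about the same, while Least Connections copes better when some requests are much slower than others.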

What is serverless computing, and is it truly scalable?

Serverless computing is an execution model where your cloud provider takes full responsibility for dynamically allocating machine resources. And yes, it is highly scalable. Since your functions only run when triggered, the platform can ramp up from zero to thousands of concurrent executions without a single manual server tweak, subject to the provider’s concurrency quotas and some cold-start latency.

Conclusion

Figuring out exactly how to build scalable web apps in the cloud is an ongoing journey, but it’s an absolutely essential one. By stepping away from rigid, legacy hosting setups and fully leaning into modern, cloud-native strategies, you give your users a far better shot at a highly available, smooth experience.

Don’t be afraid to start with the quick wins. Enable a global CDN, tidy up your database indexes, and get some solid object caching in place. Then, as your user base steadily grows, you can start transitioning your architecture toward more complex setups like containerization, auto-scaling groups, and database read replicas.

By adopting these robust DevOps practices and CI/CD workflows today, you set yourself up for long-term success. Planning for scalability from the very beginning ensures your web applications will easily shrug off tomorrow’s unexpected traffic spikes, keeping your business online, competitive, and ready for whatever comes next.