How to Integrate AI Into Your Web Apps (Step-by-Step Guide)

Web development is going through a massive evolution. As the demand for smarter, highly responsive software hits an all-time high, developers are left trying to figure out exactly how to integrate AI into their web apps, ideally without tearing down their current tech stack. Whether your goal is to spin up a helpful customer service chatbot, generate dynamic content on the fly, or build out predictive analytics, artificial intelligence isn’t just a shiny add-on anymore. More and more users expect it as a baseline.

That said, dropping machine learning models right into a live production environment can feel pretty intimidating. It’s easy to get bogged down by high latency, tangled API routing, and cloud bills that seem to have a mind of their own. If you want to bridge the gap between a standard web framework and a resource-heavy machine learning model, you need a very specific architectural game plan.

This guide is designed to walk you through that exact process. We’ll look at the common technical hurdles that trip up new AI features, share some practical quick fixes, and eventually explore advanced infrastructure setups for enterprise-level applications.

By the time you finish reading, you’ll have a clear grasp of both managed API routes and complex, self-hosted architectures. Let’s jump right into the core strategies you need to make AI integration a success.

Why AI Integration Often Fails (The Core Challenges)

Before we jump into solutions, it helps to understand why developers usually hit a wall when learning how to integrate AI into web apps. Think about it: traditional web servers are built purely for speed. They take a request, ping a database, and spit back a tiny JSON payload in a matter of milliseconds. AI, on the other hand, requires massive computational power and operates on a totally different timeframe.

A huge culprit behind failed integrations is treating a slow model call like any other request, which often leads to frustrating timeout errors when working with Large Language Models (LLMs). Unlike your typical REST API, an AI endpoint might need several seconds, or even minutes, to chew through a prompt. If you run that through a standard, synchronous HTTP request-response cycle, it can easily blow past the default timeouts of browsers, proxies, and load balancers. You need to handle these calls asynchronously, or stream partial results back as they arrive.
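
To make the asynchronous idea concrete, here is a minimal sketch using only Python’s standard library. It assumes an in-memory job store and a hypothetical `slow_model_call` function standing in for the real LLM request; the names `submit_job` and `get_status` are illustrative, not part of any framework.

```python
import queue
import threading
import time
import uuid

# In-memory job store; a real app would likely use a database or Redis.
jobs: dict[str, dict] = {}
job_queue: "queue.Queue[str]" = queue.Queue()


def slow_model_call(prompt: str) -> str:
    """Hypothetical stand-in for a slow LLM request."""
    time.sleep(0.05)  # pretend the model takes a while
    return f"echo: {prompt}"


def submit_job(prompt: str) -> str:
    """Register the job and return its id immediately (think HTTP 202)."""
    job_id = uuid.uuid4().hex
    jobs[job_id] = {"status": "processing", "prompt": prompt, "result": None}
    job_queue.put(job_id)
    return job_id


def worker() -> None:
    """Pull jobs off the queue and run them outside the request path."""
    while True:
        job_id = job_queue.get()
        job = jobs[job_id]
        try:
            job["result"] = slow_model_call(job["prompt"])
            job["status"] = "done"
        except Exception as exc:
            job["status"] = "failed"
            job["result"] = str(exc)
        finally:
            job_queue.task_done()


def get_status(job_id: str) -> dict:
    """What a polling endpoint would return to the frontend."""
    job = jobs[job_id]
    return {"status": job["status"], "result": job["result"]}


threading.Thread(target=worker, daemon=True).start()

job_id = submit_job("Summarize this ticket")
first = get_status(job_id)  # available right away, no waiting on the model
job_queue.join()            # demo only: block until the worker has finished
final = get_status(job_id)
print(first["status"], "->", final["status"])
```

The request handler only ever touches `submit_job` and `get_status`, so it returns quickly no matter how long the model takes.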

Then there’s the memory issue. Out of the box, LLM APIs are stateless: each request stands alone, and the model doesn’t remember what you told it a moment ago. If you don’t build a robust context management system, you end up with chatbots that suffer from digital amnesia, forgetting context from just two minutes prior. To fix this, you have to feed the chat history back to the model on every request, a process that can quickly inflate your token usage. That is why most apps trim or summarize older turns instead of resending everything.
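
One common way to keep that history from ballooning is to keep the system message and only the most recent turns that fit a token budget. The sketch below assumes a very rough "4 characters per token" estimate, which is a heuristic and not a real tokenizer; `trim_history` and the sample conversation are made up for illustration.

```python
def estimate_tokens(text: str) -> int:
    """Very rough heuristic (about 4 characters per token), not a real tokenizer."""
    return max(1, len(text) // 4)


def trim_history(messages: list[dict], max_tokens: int) -> list[dict]:
    """Keep system messages plus the newest turns that fit within max_tokens."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]

    budget = max_tokens - sum(estimate_tokens(m["content"]) for m in system)
    kept: list[dict] = []
    # Walk backwards so the newest turns are considered first.
    for message in reversed(turns):
        cost = estimate_tokens(message["content"])
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))


history = [
    {"role": "system", "content": "You are a helpful support agent."},
    {"role": "user", "content": "My order #123 never arrived."},
    {"role": "assistant", "content": "Sorry about that. Can you confirm your email?"},
    {"role": "user", "content": "It is sam@example.com."},
    {"role": "assistant", "content": "Thanks, I found your order."},
    {"role": "user", "content": "Also, can I change the delivery address?"},
]

trimmed = trim_history(history, max_tokens=25)
for message in trimmed:
    print(message["role"], ":", message["content"])
```

Dropping old turns is the bluntest option. Summarizing them into a single short message is a common alternative when earlier context still matters.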

Finally, scaling your cloud infrastructure improperly is a classic trap. Imagine your new natural language processing feature suddenly goes viral. Without warning, those third-party API costs can skyrocket overnight. If you haven’t set up rate limiting, caching, and infrastructure automation, your app is a sitting duck for both severe performance bottlenecks and massive budget overruns.
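
As a rough illustration of rate limiting, here is a token-bucket sketch in plain Python. The limits (a burst of 5, refilling at 1 request per second) and the `allow_request` helper are assumptions chosen for the example; in production you would more likely lean on your gateway or a shared store so limits hold across servers.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_sec` tokens per second."""

    def __init__(self, capacity: int, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Top the bucket up for the time that has passed, capped at capacity.
        self.tokens = min(float(self.capacity),
                          self.tokens + elapsed * self.refill_per_sec)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


buckets: dict[str, TokenBucket] = {}


def allow_request(client_key: str) -> bool:
    """One bucket per IP address or user id (hypothetical limits)."""
    if client_key not in buckets:
        buckets[client_key] = TokenBucket(capacity=5, refill_per_sec=1.0)
    return buckets[client_key].allow()


# Demo with a fake clock so the behaviour is easy to follow.
fake_now = [0.0]
bucket = TokenBucket(capacity=3, refill_per_sec=1.0, clock=lambda: fake_now[0])
burst = [bucket.allow() for _ in range(4)]       # fourth call exceeds the burst
fake_now[0] += 2.0                               # two seconds pass
after_wait = [bucket.allow() for _ in range(3)]  # two tokens have been refilled
print(burst, after_wait)
```

When `allow_request` returns `False`, the handler would typically respond with HTTP 429 before any paid API call is made.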

Quick Fixes: Basic Solutions for AI Integration

If your goal is to get a smart, reliable, and secure feature up and running today, leaning on established third-party APIs is easily your best starting point. By outsourcing the heavy computational lifting to dedicated providers, your own web servers stay fast and lightweight. Here are a few straightforward ways to pull this off.

- Use Managed LLM APIs: The absolute quickest path to market is hooking your backend directly to something like the OpenAI API, Anthropic, or Google Gemini. These platforms offer straightforward REST endpoints, plus official SDKs for languages like Python and Node.js and community-maintained clients for many others, including PHP. In just a handful of lines of code, you can start generating text or analyzing user data.

- Leverage Vercel AI SDK: If your stack revolves around modern frameworks like Next.js or React, the Vercel AI SDK is a total game-changer. It effortlessly takes care of the complex data streaming needed to create seamless “typing” effects, handling UI state updates automatically so you don’t have to.

- Implement Pre-built AI Widgets: Sometimes, building a UI from scratch just isn’t worth the time. Platforms like Dialogflow or various specialized SaaS tools provide drop-in JavaScript components for chat interfaces. This drastically reduces custom frontend work, letting you deploy a polished chat experience quickly.

- Use Webhooks for Asynchronous Tasks: When you’re dealing with heavy jobs like image generation or massive data analysis, never leave the HTTP request hanging open. Instead, fire the prompt off to the API, return a “processing” status to your frontend, and use a webhook to ping your server the moment the AI finishes the job.
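
The webhook pattern from the last bullet might look something like the sketch below. Everything specific here is an assumption for illustration: the payload shape (`job_id`, `output`), the shared secret, and the HMAC-SHA256 signature scheme. Real providers document their own formats, so check theirs before copying this.

```python
import hashlib
import hmac
import json

WEBHOOK_SECRET = b"replace-me"  # hypothetical secret shared with the provider

# Jobs your backend has already handed to the AI provider.
jobs = {"job-42": {"status": "processing", "result": None}}


def sign(body: bytes, secret: bytes = WEBHOOK_SECRET) -> str:
    """Hex HMAC-SHA256 of the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(body: bytes, signature: str) -> int:
    """Return the HTTP status code the webhook endpoint would send back."""
    # Constant-time comparison; reject anything not signed with our secret.
    if not hmac.compare_digest(sign(body).encode(), signature.encode()):
        return 401
    event = json.loads(body)
    job = jobs.get(event.get("job_id"))
    if job is None:
        return 404
    job["status"] = "done"
    job["result"] = event.get("output")
    return 200


payload = json.dumps({"job_id": "job-42", "output": "A cat in a hat"}).encode()
status = handle_webhook(payload, sign(payload))
forged = handle_webhook(payload, "not-a-real-signature")
print(status, forged, jobs["job-42"]["status"])
```

Verifying the signature matters because a webhook URL is public: without the check, anyone who finds it could mark jobs as finished with made-up results.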

Starting with these managed solutions is absolutely ideal for building out a Minimum Viable Product (MVP). They give you the breathing room to test your AI strategy in the wild, completely bypassing the weeks it would otherwise take to configure and debug expensive cloud infrastructure.

Advanced Solutions for Custom AI Apps

Of course, as your user base scales, leaning entirely on third-party APIs can start to drain your budget—or create headaches around data privacy compliance. As a result, many advanced developers eventually make the leap to self-hosted models or deeply customized enterprise cloud environments.

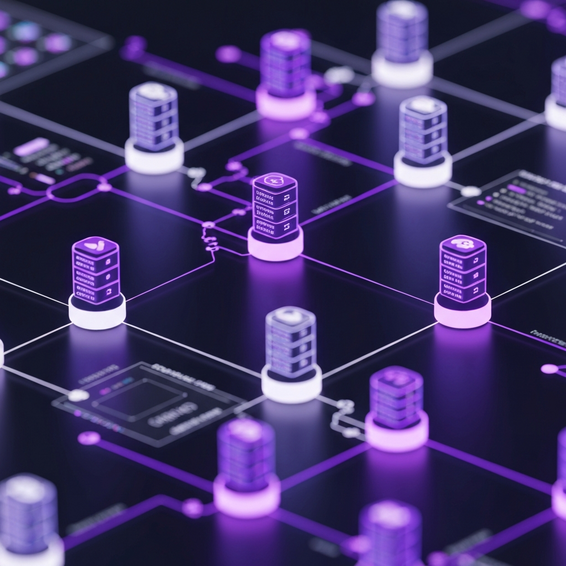

If you look at it from a DevOps perspective, the next logical move is deploying open-source models via Docker and Kubernetes. Ecosystem tools like Ollama, vLLM, or Hugging Face Text Generation Inference make it entirely possible to run capable open models, like Llama 3, right on your own GPU-backed instances. This can cut latency for specialized tasks by removing the round trip to a third-party API, and it keeps prompts and user data inside infrastructure you control.

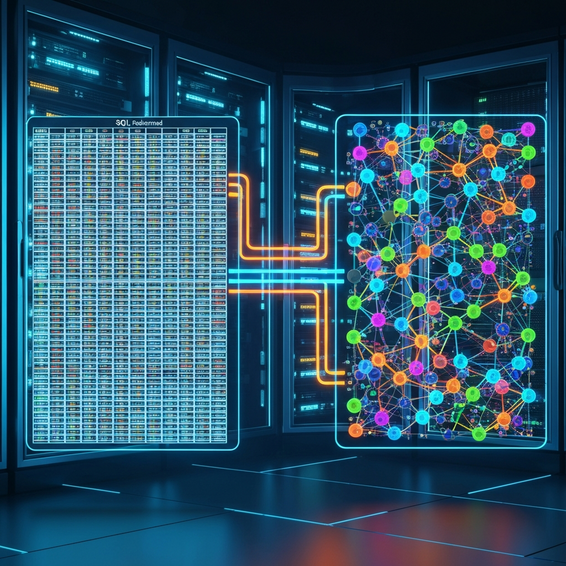

Another highly effective, modern technique to consider is RAG (Retrieval-Augmented Generation). Rather than sinking time and money into the incredibly tedious process of fine-tuning a massive model, you just hook your LLM up to a vector database. From there, you generate “embeddings” of your company’s private documents and neatly store them in that database.

Here’s how it works in practice: when a user asks a question, your backend converts it into an embedding, searches the vector database for the most relevant chunks, pulls the text, and injects it into the prompt. This lets the AI draw on your private company data at request time, which noticeably reduces hallucinations and keeps responses grounded in your own documents. It doesn’t eliminate mistakes entirely, so answer quality still depends on retrieving the right context.
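
Here is a toy version of that retrieval step, assuming hand-made three-dimensional vectors in place of real embeddings and a plain Python list in place of a vector database. The documents, vectors, and prompt wording are all invented for illustration.

```python
import math

# Toy 3-dimensional "embeddings", hand-made for illustration only.
# A real system would get these from an embedding model.
DOCUMENTS = [
    ("Refunds are issued within 14 days of purchase.", [0.9, 0.1, 0.0]),
    ("Our office is closed on public holidays.", [0.0, 0.2, 0.9]),
    ("Premium plans include priority support.", [0.1, 0.9, 0.1]),
]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vector: list[float], k: int = 1) -> list[str]:
    """Return the text of the k documents most similar to the query."""
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc: cosine_similarity(query_vector, doc[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


def build_prompt(question: str, context: list[str]) -> str:
    """Inject the retrieved text into the prompt sent to the model."""
    joined = "\n".join(f"- {chunk}" for chunk in context)
    return (
        "Answer using only the context below. "
        "If the answer is not there, say so.\n\n"
        f"Context:\n{joined}\n\nQuestion: {question}"
    )


question = "How long do refunds take?"
query_vector = [0.8, 0.2, 0.1]  # pretend embedding of the question
prompt = build_prompt(question, retrieve(query_vector, k=1))
print(prompt)
```

A vector database does the same ranking, just with an index that stays fast across millions of chunks.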

Best Practices for Performance and Security

Figuring out how to integrate AI into your web apps on a conceptual level is really only half the battle. The real test is keeping things secure and performing well in a production environment—that’s what separates a weekend side project from rock-solid, enterprise-grade software. Keep these crucial optimization tips in mind as you build.

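- Implement Semantic Caching: Don’t pay for the same answer twice. A plain key-value cache only helps when two queries match character for character, so a semantic cache stores an embedding of each query alongside its response (Redis with vector search or a specialized AI caching layer can do this). If two users ask slight variations of the same question and the embeddings are similar enough, your system just hands over the cached response. That cuts API costs and brings load times for cache hits down to milliseconds. Tune the similarity threshold carefully, since a loose one will serve answers to the wrong question.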

- Enforce Strict Rate Limiting: Because they are so resource-heavy, AI endpoints are massive targets for API scraping, abuse, and DDoS attacks. Make sure you set up aggressive rate limiting tied to IP addresses or authenticated user accounts. The last thing you want is a rogue bot script draining your monthly API credits in an hour.

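- Sanitize AI Inputs and Outputs: “Prompt injection” is often described as the SQL injection of the AI era. Bad actors will actively try to manipulate your AI into leaking system prompts or triggering unauthorized actions. Rule number one: never trust user input. Unlike SQL injection, though, there is no equivalent of a parameterized query that closes the hole completely, so treat filtering as one layer of defense. Validate data before it hits the model, keep secrets out of prompts, limit what the model’s output is allowed to trigger, and filter responses so you aren’t serving up inappropriate content.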

- Utilize Background Processing: Since we know AI computations take time, get them out of your request-handling path. Offload these intensive tasks to a background job queue like Celery or BullMQ, backed by a broker such as RabbitMQ or Redis. Once the AI finishes thinking, you can use WebSockets to push a real-time notification to the frontend.

Recommended Tools and Resources

If you want to build durable, production-ready AI features, you have to pack your developer stack with the right gear. Here are a few standout platforms we consistently recommend for seamless web integration.

- LangChain: This framework (available for both Python and JavaScript) is simply phenomenal for wiring up language model applications. It takes the headache out of chaining multiple prompts together, parsing messy outputs, and hooking up external data sources.

- Hugging Face: Think of this as the ultimate GitHub for machine learning. It’s an incredible hub for open-source models, and you can leverage their inference endpoints to easily test different models before committing to a hefty local deployment.

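- AWS Bedrock & Azure OpenAI: These are fully managed, enterprise-tier services. Amazon Bedrock puts foundation models from several providers behind a single API, while Azure OpenAI serves OpenAI’s models from inside Azure. Both plug into their cloud’s existing identity, networking, and compliance tooling, which makes them strong candidates if you operate in an industry with strict compliance rules.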

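- Pinecone / pgvector: When you need to build RAG architectures, these are two popular options. Pinecone is a managed vector database, while pgvector is an extension that adds vector search to PostgreSQL, which is handy if your data already lives there. Both make it surprisingly simple to store your embeddings and give your AI applications a sense of long-term memory.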

Frequently Asked Questions (FAQ)

What is the easiest way to add AI to my website?

The hands-down easiest method is plugging into a managed API like OpenAI’s GPT-4. By just firing off a standard HTTP POST request from your backend, you can pull in incredibly smart text responses. Best of all, you get to skip the nightmare of provisioning hardware, tweaking server configs, or training models from scratch.
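
For a sense of how small that POST request is, here is a sketch against OpenAI’s chat completions endpoint using only Python’s standard library. It assumes your key lives in an `OPENAI_API_KEY` environment variable and uses `gpt-4` as the model name; available model names change over time, so check the provider’s current list. The helper names are made up for this example.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_request(prompt: str, api_key: str,
                  model: str = "gpt-4") -> urllib.request.Request:
    """Assemble the POST request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_text(response_body: dict) -> str:
    """Pull the assistant's reply out of a chat completions response."""
    return response_body["choices"][0]["message"]["content"]


def ask(prompt: str) -> str:
    """Send the request from your backend. Needs a real API key and network access."""
    request = build_request(prompt, os.environ["OPENAI_API_KEY"])
    with urllib.request.urlopen(request, timeout=60) as response:
        return extract_text(json.load(response))


# Building the request is safe to run anywhere; calling ask() costs money.
request = build_request("Write a one-line welcome message.", api_key="sk-placeholder")
print(request.get_method(), request.full_url)
```

Keep this call on the server. Putting the API key in frontend JavaScript exposes it to anyone who opens the browser’s dev tools.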

How much does it cost to run AI in a web app?

Honestly, it varies wildly depending on how you build it. Third-party APIs usually charge by the token (roughly a word or a fragment of a word), for both the input you send and the output you get back. This is remarkably cheap on day one, but the costs grow roughly in proportion to usage. On the flip side, self-hosting means paying for expensive GPU servers (like an AWS EC2 P-series instance) whether they are busy or idle. It’s a bigger monthly pill to swallow initially, but it can become more cost-effective once your volume is high enough to keep those GPUs busy.
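
A quick back-of-the-envelope calculation can show where that crossover sits. All the numbers below are hypothetical placeholders (a price of $0.01 per 1,000 tokens, 1,500 tokens per request, $2,000 per month for a GPU server), and the comparison ignores engineering time and ops overhead, so plug in your own figures.

```python
import math


def api_monthly_cost(requests_per_month: int, tokens_per_request: int,
                     price_per_1k_tokens: float) -> float:
    """Pay-per-token cost: grows in proportion to usage."""
    return requests_per_month * tokens_per_request / 1000 * price_per_1k_tokens


def break_even_requests(gpu_monthly_cost: float, tokens_per_request: int,
                        price_per_1k_tokens: float) -> int:
    """Monthly request volume at which the API bill matches the fixed GPU bill."""
    cost_per_request = tokens_per_request / 1000 * price_per_1k_tokens
    return math.ceil(gpu_monthly_cost / cost_per_request)


# Hypothetical figures, not real quotes from any provider.
PRICE_PER_1K_TOKENS = 0.01   # dollars
TOKENS_PER_REQUEST = 1500    # prompt plus response
GPU_MONTHLY_COST = 2000.0    # dollars

small_app = api_monthly_cost(10_000, TOKENS_PER_REQUEST, PRICE_PER_1K_TOKENS)
threshold = break_even_requests(GPU_MONTHLY_COST, TOKENS_PER_REQUEST, PRICE_PER_1K_TOKENS)
print(f"10k requests/month via API: ${small_app:.2f}")
print(f"Break-even volume: {threshold} requests/month")
```

Below the break-even volume, the per-token API is the cheaper option under these assumptions. Above it, the fixed GPU bill starts to win, provided one server can actually handle that load.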

Is Python required for AI web development?

Not at all! While Python is undeniably the king when it comes to training and fine-tuning models, actually integrating those pre-trained models into a web app is language-agnostic. Whether you love Node.js, Go, PHP, or Ruby, you’ll find solid HTTP clients and official or community-maintained SDKs for the major AI APIs.

Can AI models securely access my private database?

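Absolutely, as long as you control what the model gets to see. A common way to handle this is Retrieval-Augmented Generation (RAG). You convert your private records into vector embeddings and store them in a database you control. When a user types a query, your backend fishes out only the highly relevant pieces of data, ideally after applying that user’s access permissions, and temporarily hands them to the AI to help it craft an answer. Your data is never baked into the model’s training weights this way. Keep in mind that the retrieved text still travels to whichever model you call, so check your provider’s data-handling terms or use a self-hosted model for highly sensitive data.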

Conclusion

At the end of the day, figuring out how to integrate AI into your web apps really shouldn’t feel like an insurmountable mountain. By starting off with managed third-party APIs, keeping a close eye on your asynchronous state, and locking down your security from day one, you can deliver next-generation, intelligent features to your users incredibly fast.

As your app inevitably grows and gets hungrier for computational power, you’ll have the foundation needed to pivot toward advanced setups. You can confidently roll out self-hosted models, containerized microservices, and custom vector databases. Just never lose sight of the fundamentals: enforce your rate limits, embrace semantic caching, and obsessively monitor your dashboards to keep those cloud bills predictable.

The best piece of advice? Start small. Find just one targeted feature—maybe an intelligent search bar, an automated support bot, or a quick summarization tool—and build it well using a reliable service. The future of software is undeniably AI-driven, and getting your integration strategy dialed in today will put you lightyears ahead of the competition tomorrow.