The Ultimate SQL Performance Tuning Guide for Developers

We’ve all been there: staring at a loading spinner, waiting for a sluggish application to finally render. Very often, the culprit isn’t your shiny new front-end or your application code; it’s the database. Slow SQL queries quietly eat up server resources, bloat page load times, and ultimately drive your users away. And in today’s landscape of scalable cloud architecture, a poorly optimized data layer is a fast way to accidentally inflate your monthly infrastructure bill.

It usually happens gradually. As your database grows from a few thousand rows to millions, queries that used to finish in a handful of milliseconds suddenly take seconds, or worse, minutes. Today’s developers frequently rely on Object-Relational Mappers (ORMs) to speed up development. While these tools are incredibly convenient, they can quietly generate bizarre and inefficient SQL behind the scenes. Because of this, database optimization has shifted from a “nice-to-have” skill to a necessity for developers, DBAs, and DevOps engineers.

Whether you’re fighting with a bloated legacy system, trying to speed up a sluggish reporting dashboard, or just looking to write cleaner application code, we’ve got you covered. This comprehensive SQL performance tuning guide will walk you through exactly why databases slow down, a few actionable quick fixes you can apply today, and the advanced tuning strategies you’ll need for long-term scalability.

Why Slow SQL Queries Happen

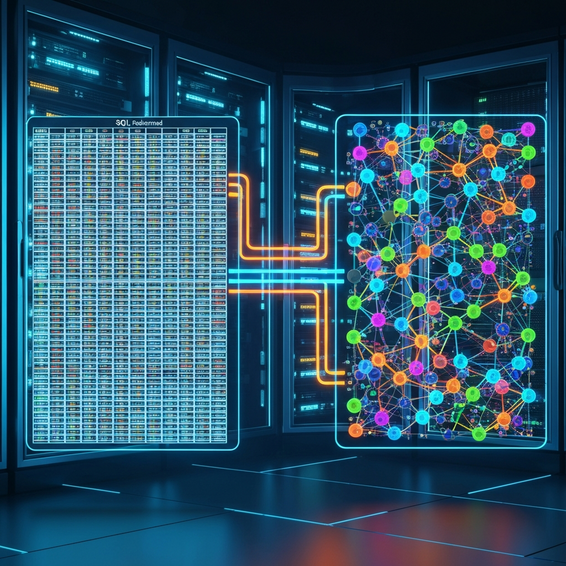

When you strip away the complexity, poor database performance usually comes down to inefficient data retrieval. When your database engine receives a query, its first job is to work out the cheapest way to fetch that data by building an execution plan. If the engine doesn’t have the right guidance or underlying structures in place, it ends up taking the scenic route.

Missing or improper database indexing easily ranks as the most common reason for a sluggish query. If you don’t provide an index, the database engine has no choice but to scan every single row in your table to find what it’s looking for. We call this a full table scan. Now, if you only have a few hundred records, this happens so fast you won’t even notice. But try doing that on a massive scale, and you’ll quickly drain your system’s CPU cycles, memory, and disk I/O.
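
To make the difference between a full table scan and an index lookup concrete, here is a minimal sketch that uses Python’s built-in sqlite3 module as a stand-in database. SQLite is an assumption chosen because it needs no server. Its EXPLAIN QUERY PLAN output is formatted differently from MySQL, PostgreSQL, or SQL Server, but it shows the same scan-versus-search distinction. The orders table, its data, and the idx_orders_customer index are hypothetical.

```python
import sqlite3


def plan_details(conn, sql, params=()):
    """Return the human-readable 'detail' column of SQLite's EXPLAIN QUERY PLAN."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 500, i * 1.5) for i in range(10_000)],
)

query = "SELECT id, total FROM orders WHERE customer_id = ?"

# No index on customer_id yet: expect a SCAN step, meaning every row is read.
before = plan_details(conn, query, (42,))

# Add a B-tree index on the filtered column: expect a SEARCH step using that index.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = plan_details(conn, query, (42,))

print(before)
print(after)
```

Exact wording varies by SQLite version (older releases print SCAN TABLE orders), so look for the SCAN and SEARCH keywords rather than the full string.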

Beyond missing indexes, you’ll often run into lock contention and deadlocks. Lock contention happens when multiple transactions try to modify (or, on some engines, read) the same rows at the same time, so some of them have to wait in a queue until the locks are released. A deadlock is the worst case: two transactions each hold a lock the other one needs, so neither can proceed until the engine aborts one of them. Add in accidental Cartesian joins, a few clunky subqueries, or heavy sorting operations in the database layer, and you have a perfect recipe for exhausting your system’s memory.

Quick Fixes for Query Optimization

The good news? You rarely need a massive architectural rewrite to start seeing immediate improvements. By just applying a handful of basic query tuning principles, you can significantly slash both load times and resource consumption. Here are the foundational steps you should be looking at right now.

- Stop Using SELECT *: Asking your database to fetch every single column is wasteful. It can force the engine to read extra data pages from disk, clogs up memory in the buffer pool, hogs network bandwidth, and can prevent the engine from answering the query from an index alone. Make it a habit to select only the columns you actually need.

- Implement Meaningful Indexes: Take a close look at the columns you rely on most heavily in your WHERE, JOIN, GROUP BY, and ORDER BY clauses. Adding B-tree indexes on these key columns lets the engine locate matching rows by walking a balanced tree, which takes logarithmic time, rather than plodding through the entire table.

- Write Sargable Queries: SARGable (short for “Search Argument Able”) means phrasing your WHERE clauses so the engine can still use your indexes. A classic mistake is wrapping an indexed column in a function. For instance, WHERE YEAR(created_at) = 2023 prevents an ordinary index on created_at from being used for a seek. Instead, rewrite it as a range to keep things optimized: WHERE created_at >= '2023-01-01' AND created_at < '2024-01-01'.

- Filter Data Early: Use selective WHERE clauses to narrow down your dataset right out of the gate. The less data you push into your joins and sorting operations, the faster everything executes.

- Use EXISTS Instead of IN: When you only need to know whether matching records exist in a subquery, lean on EXISTS rather than IN. EXISTS can stop scanning the moment it hits the first match, whereas on some engines IN may process the entire subquery before moving on. Many modern optimizers rewrite both forms into the same semi-join, so check the execution plan before assuming a difference.
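
One concrete cost of SELECT * is that it can rule out a covering index, where the engine answers the query from the index alone without visiting the table. The sketch below compares the two plans. It assumes SQLite via Python’s sqlite3 module and a hypothetical orders table with a composite index named idx_orders_customer_total.

```python
import sqlite3


def plan_details(conn, sql, params=()):
    """Return the 'detail' column of SQLite's EXPLAIN QUERY PLAN."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, "
    "total REAL, notes TEXT, shipping_address TEXT)"
)
# Composite index holding both the filter column and the one value we want back.
conn.execute("CREATE INDEX idx_orders_customer_total ON orders (customer_id, total)")

# SELECT * needs notes and shipping_address too, so each index hit triggers a table lookup.
star = plan_details(conn, "SELECT * FROM orders WHERE customer_id = ?", (42,))

# Asking only for indexed columns lets SQLite stay inside the index (a COVERING INDEX).
narrow = plan_details(conn, "SELECT customer_id, total FROM orders WHERE customer_id = ?", (42,))

print(star)
print(narrow)
```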
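
The sargability advice can be checked the same way. SQLite has no YEAR() function, so this sketch uses strftime('%Y', created_at) as the equivalent function-wrapped filter and compares it with the half-open date range. It assumes Python’s sqlite3 module and a hypothetical orders table that stores created_at as ISO-8601 text.

```python
import sqlite3
from datetime import datetime, timedelta


def plan_details(conn, sql):
    """Return the 'detail' column of SQLite's EXPLAIN QUERY PLAN."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, created_at TEXT, total REAL)")
conn.execute("CREATE INDEX idx_orders_created ON orders (created_at)")

# One order per day from 2022-01-01 onward, stored as 'YYYY-MM-DD HH:MM:SS' text.
start = datetime(2022, 1, 1)
conn.executemany(
    "INSERT INTO orders (created_at, total) VALUES (?, ?)",
    [((start + timedelta(days=i)).strftime("%Y-%m-%d %H:%M:%S"), float(i)) for i in range(1000)],
)

# Non-sargable: the function hides created_at from the index, so every row is checked.
wrapped = "SELECT id, total FROM orders WHERE strftime('%Y', created_at) = '2023'"
# Sargable: a half-open range on the bare column can be answered with an index seek.
ranged = ("SELECT id, total FROM orders "
          "WHERE created_at >= '2023-01-01' AND created_at < '2024-01-01'")

wrapped_plan = plan_details(conn, wrapped)
ranged_plan = plan_details(conn, ranged)
wrapped_rows = conn.execute(wrapped).fetchall()
ranged_rows = conn.execute(ranged).fetchall()

print(wrapped_plan)
print(ranged_plan)
```

Both queries return the same 365 rows; only the range version can seek into idx_orders_created.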

Advanced Solutions for Database Performance

Once the basic fixes are out of the way, it’s time to open up the hood. For developers and operations teams who really want to push their systems, advanced database performance tuning means digging into exactly how your engine translates and runs your SQL commands.

1. Analyze the Execution Plan

If you want a superpower in database optimization, learn how to read an execution plan. Put EXPLAIN in front of your query (the exact syntax depends on your SQL dialect) and the engine shows you the plan it intends to use. Variants such as PostgreSQL’s EXPLAIN ANALYZE go further: they actually execute the query and report real timings and row counts alongside the estimates.

This output is a goldmine. It tells you right away whether the engine is using an index, falling back to a sequential (full table) scan, or running a nested loop that may be expensive on large inputs. Compare the estimated cost of each operation, and with EXPLAIN ANALYZE the actual time and row counts, to pinpoint the bottleneck; then adjust your query or indexes and check the plan again.
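
Reading a plan gets easier when its nesting is visible. The helper below is a sketch that assumes SQLite via Python’s sqlite3 module. It indents EXPLAIN QUERY PLAN rows by their parent id and flags full table scans. SQLite has no EXPLAIN ANALYZE, so this shows the chosen plan only, not actual timings. The explain_tree and full_table_scans helpers and the schema are hypothetical.

```python
import sqlite3


def explain_tree(conn, sql, params=()):
    """Render SQLite's EXPLAIN QUERY PLAN rows as an indented list of strings."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    depth = {0: -1}  # top-level steps report parent id 0
    lines = []
    for node_id, parent_id, _unused, detail in rows:
        depth[node_id] = depth.get(parent_id, -1) + 1
        lines.append("  " * depth[node_id] + detail)
    return lines


def full_table_scans(lines):
    """Return SCAN steps that read a table without using any index."""
    steps = [line.strip() for line in lines]
    return [s for s in steps if s.startswith("SCAN") and "INDEX" not in s]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT)")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")

sql = ("SELECT c.region, SUM(o.total) FROM orders o "
       "JOIN customers c ON c.id = o.customer_id "
       "WHERE o.total > ? GROUP BY c.region")

tree = explain_tree(conn, sql, (100,))
print("\n".join(tree))
print("full table scans:", full_table_scans(tree))
```

A flagged scan is not automatically a problem (scanning a small table is often the cheapest option), but on a large table it is the first place to look.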

2. Optimize Complex JOIN Operations

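The way you join your tables plays a massive role in overall speed. If you join two large tables without an index on the join column (typically the foreign key), the engine is left with expensive options: a naive nested loop join that compares every row in Table A against every row in Table B, or a hash or merge join that must first build a hash table or sort both inputs, consuming memory and CPU. Indexing the foreign key lets the engine use an index nested loop join, probing the index for matching rows instead of scanning, and the index’s sort order can also feed a merge join. Which strategy wins depends on table sizes and the optimizer’s estimates, so confirm the choice in the execution plan.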
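
The sketch below shows what indexing a join column does to the plan, assuming SQLite via Python’s sqlite3 module. SQLite implements every join as a nested loop, so it cannot demonstrate hash or merge joins. What it does show is the step from a naive nested loop (both tables scanned) to an index nested loop (the inner table probed through an index). PRAGMA automatic_index = OFF stops SQLite from building a temporary index on its own, which would otherwise hide the naive case. Table, column, and index names are hypothetical.

```python
import sqlite3


def plan_details(conn, sql):
    """Return the 'detail' column of SQLite's EXPLAIN QUERY PLAN."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


conn = sqlite3.connect(":memory:")
# Disable SQLite's automatic temporary indexes so the unindexed join stays naive.
conn.execute("PRAGMA automatic_index = OFF")
conn.execute("CREATE TABLE customers (customer_ref TEXT, name TEXT)")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_ref TEXT, total REAL)")

sql = ("SELECT c.name, o.total FROM customers c "
       "JOIN orders o ON o.customer_ref = c.customer_ref")

# Both tables scanned: each outer row is compared with every inner row.
before = plan_details(conn, sql)

# Index the join column; the inner loop can now seek matching orders directly.
conn.execute("CREATE INDEX idx_orders_customer_ref ON orders (customer_ref)")
after = plan_details(conn, sql)

print(before)
print(after)
```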

3. Resolve Index Fragmentation

Databases are messy, living things. As records are constantly inserted, updated, and deleted, index pages split and fill unevenly, and the logical order of your carefully planned indexes drifts away from the physical order of their pages on disk. This index fragmentation means range scans need more, and more scattered, reads. On spinning disks that translates into extra seeks that drag down read speeds; on SSDs the seek penalty is smaller, but half-empty pages still waste memory and I/O.

To keep things running smoothly, database administrators should configure automated maintenance jobs. Thresholds vary by source, but a widely cited rule of thumb from SQL Server guidance is to reorganize an index when fragmentation is between roughly 5% and 30%, and to rebuild it once fragmentation exceeds 30%.
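
Once fragmentation figures are collected, turning them into maintenance commands is a simple threshold rule. In SQL Server, for example, those figures come from the avg_fragmentation_in_percent column of sys.dm_db_index_physical_stats. The sketch below is hypothetical. The IndexFragmentation class and maintenance_statement function are illustrative names. Both thresholds are parameters whose defaults follow the commonly cited 5%/30% SQL Server guidance. The sketch only builds ALTER INDEX ... REORGANIZE / REBUILD strings rather than running them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class IndexFragmentation:
    schema: str
    table: str
    index: str
    fragmentation_pct: float  # e.g. avg_fragmentation_in_percent from the DMV


def maintenance_statement(stats: IndexFragmentation,
                          reorganize_at: float = 5.0,
                          rebuild_at: float = 30.0) -> Optional[str]:
    """Map one fragmentation reading to a T-SQL ALTER INDEX statement, or None."""
    if stats.fragmentation_pct > rebuild_at:
        action = "REBUILD"
    elif stats.fragmentation_pct >= reorganize_at:
        action = "REORGANIZE"
    else:
        return None  # lightly fragmented: leave it alone
    # Assumes names contain no ']' characters that would need escaping.
    return f"ALTER INDEX [{stats.index}] ON [{stats.schema}].[{stats.table}] {action};"


readings = [
    IndexFragmentation("dbo", "Orders", "IX_Orders_CustomerId", 42.0),
    IndexFragmentation("dbo", "Orders", "IX_Orders_CreatedAt", 12.5),
    IndexFragmentation("dbo", "Customers", "IX_Customers_Region", 1.2),
]
statements = [maintenance_statement(r) for r in readings]
for stmt in statements:
    print(stmt)
```

Keeping the thresholds as parameters makes it easy to apply a stricter or looser policy per environment.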

4. Table Partitioning

Sometimes your tables are just too big. When you’re dealing with hundreds of millions or billions of rows, queries that touch large ranges of data, along with maintenance tasks such as index rebuilds and bulk deletes, can become slow even with good indexes. That’s where table partitioning comes in. It physically splits a massive table into smaller, more manageable chunks based on a partition key, such as a date range or a geographical region.

Through a technique known as partition pruning, the database engine scans only the partitions whose key ranges can match the query and skips the rest of the dataset. For queries that filter on the partition key, this can dramatically cut read times, and it can make some write and maintenance work cheaper because that work touches only the relevant partitions.
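
Partition pruning itself is simple to model. The class below is a toy, in-memory sketch, not how any particular engine stores partitions. Rows are routed to monthly partitions by date, and a range query skips every partition whose bounds cannot overlap the requested range. MonthPartitionedTable and its methods are hypothetical.

```python
from datetime import date


class MonthPartitionedTable:
    """Toy in-memory model of a table range-partitioned by calendar month."""

    def __init__(self):
        self.partitions = {}  # (year, month) -> list of (row_date, payload)

    def insert(self, row_date, payload):
        # Routing: each row lands in exactly one partition, chosen by its partition key.
        key = (row_date.year, row_date.month)
        self.partitions.setdefault(key, []).append((row_date, payload))

    @staticmethod
    def _bounds(key):
        year, month = key
        lower = date(year, month, 1)
        upper = date(year + 1, 1, 1) if month == 12 else date(year, month + 1, 1)
        return lower, upper  # half-open range [lower, upper)

    def query(self, start, end):
        """Return rows with start <= row_date < end, plus the partitions actually read."""
        scanned, results = [], []
        for key in sorted(self.partitions):
            lower, upper = self._bounds(key)
            if upper <= start or lower >= end:
                continue  # pruned: this partition cannot contain matching rows
            scanned.append(key)
            results.extend(row for row in self.partitions[key] if start <= row[0] < end)
        return results, scanned


table = MonthPartitionedTable()
for month in range(1, 13):
    for day in range(1, 29):
        table.insert(date(2023, month, day), f"order-{month}-{day}")

rows, scanned = table.query(date(2023, 3, 1), date(2023, 4, 1))
print(len(rows), scanned)
```

A query for March reads one of twelve partitions; real engines make the same decision in the planner, using the declared partition bounds.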

Best Practices for Long-Term Health

Cleaning up old code is a great start, but it’s really only half the battle. If you want to maintain that snappy performance as your application grows, you need to bake some robust engineering habits into your workflow.

- Keep Transactions Short: A long-running transaction holds its locks until it commits or rolls back, blocking any other session that needs to write (or, on some engines, read) the same rows or tables. Keep your transaction windows as brief as possible so multiple processes can run concurrently.

- Utilize Connection Pooling: Opening and closing database connections over the network is surprisingly expensive, since each new connection involves a network handshake, authentication, and server-side setup. Instead, set up connection pooling at the application layer, or use a dedicated proxy tool, to keep a warm, stable pool of reusable connections ready to go.

- Choose Appropriate Data Types: Don’t reach for a BIGINT when a standard INT or even a SMALLINT will do the job perfectly fine. Likewise, try to avoid defaulting to VARCHAR(MAX) for standard text strings. Smaller data types save disk space and let more rows fit into each page in memory, which directly translates to better cache hit rates.

- Update Statistics Regularly: The query optimizer relies heavily on internal statistics, such as row counts and value distributions, to build its execution plans. If the makeup of your data shifts over time but your statistics go stale, the optimizer is effectively flying blind and may choose a very inefficient plan.
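
A connection pool can be sketched in a few lines. This version is hypothetical and deliberately minimal. It opens a fixed number of connections up front, using Python’s sqlite3 module as a stand-in for a networked database, and hands them out through a context manager. Production pools, whether in your driver, your ORM, or a proxy, also validate, reset, and replace broken connections, which this sketch does not do.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """Minimal fixed-size pool: connections are opened once up front and reused."""

    def __init__(self, factory, size):
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            self._idle.put(factory())

    @contextmanager
    def connection(self, timeout=5.0):
        # Blocks until a connection is free; raises queue.Empty after `timeout` seconds.
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)  # hand it back instead of closing it

    def close_all(self):
        while True:
            try:
                self._idle.get_nowait().close()
            except queue.Empty:
                break


pool = ConnectionPool(lambda: sqlite3.connect(":memory:", check_same_thread=False), size=2)
seen = set()
for _ in range(5):
    with pool.connection() as conn:
        conn.execute("SELECT 1").fetchone()
        seen.add(id(conn))

print(len(seen))  # five checkouts served by the two pooled connections
pool.close_all()
```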
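
To see what the optimizer’s statistics look like, here is a sketch assuming SQLite via Python’s sqlite3 module. In SQLite, the ANALYZE command stores its results in the sqlite_stat1 table. The equivalent commands elsewhere are ANALYZE in PostgreSQL, ANALYZE TABLE in MySQL, and UPDATE STATISTICS in SQL Server. The orders table and idx_orders_customer index are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")

# 1,000 orders spread evenly over 100 customers.
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(1000)],
)

# Gather fresh statistics; SQLite writes them to the sqlite_stat1 table.
conn.execute("ANALYZE")
stats = conn.execute("SELECT tbl, idx, stat FROM sqlite_stat1").fetchall()
index_stat = next(stat for tbl, idx, stat in stats if idx == "idx_orders_customer")
print(index_stat)
```

The stat value starts with the number of index entries (1000), followed by the average number of rows per distinct customer_id (10). Stale numbers here are exactly what leads the optimizer astray.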

Recommended Tools for SQL Tuning

Knowing how to manually tune queries is a fantastic skill, but let’s be real—specialized tools make life so much easier. They can automatically watch your workloads, sniff out hidden bottlenecks, and even recommend index upgrades. Here are a few top-tier tools worth checking out:

- SolarWinds Database Performance Analyzer: This is an exceptional platform if you want to dive deep into wait-time analysis. It’s incredibly helpful for spotting the precise queries that are locking up your system.

- pg_stat_statements: If you’re running a PostgreSQL environment, this extension (shipped with PostgreSQL) is an absolute must-have. It tracks execution statistics for the SQL statements hitting your server, aggregated per normalized query, so you can rank queries by total or average time.

- Redgate SQL Toolbelt: Considered an industry standard for SQL Server folks, this suite handles everything from schema deployments and smart code completion to detailed performance monitoring.

Folding the right tools into a modern DevOps workflow just makes sense. It acts as a safety net, ensuring a bad code deployment doesn’t accidentally wreck your SQL environments without you immediately getting an alert.

Frequently Asked Questions

What is SQL performance tuning?

At its core, SQL performance tuning is the systematic process of cleaning up database queries, refining your indexing strategies, and improving overall schema design. The end goal is simple: retrieve and update your data as fast as possible while putting the absolute minimum amount of strain on your CPU, memory, and disks.

How do I identify slow SQL queries?

The easiest way to spot troublemakers is to turn on your database’s native slow query logging: MySQL has a dedicated slow query log, and PostgreSQL can log any statement that runs longer than its log_min_duration_statement setting. Alternatively, you can track them using Application Performance Monitoring (APM) tools like Datadog or New Relic. If you’re using SQL Server, querying dynamic management views like sys.dm_exec_query_stats works wonders too.
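
Native slow query logs are the first place to look, but an application-side timer can complement them, for example when you cannot change server settings. The SlowQueryLogger class below is a hypothetical sketch that uses Python’s sqlite3 module as the database. It times each statement, including fetching its rows, and keeps the ones that cross a threshold.

```python
import sqlite3
import time


class SlowQueryLogger:
    """Application-side slow query log: records statements slower than a threshold."""

    def __init__(self, conn, threshold_ms):
        self.conn = conn
        self.threshold_ms = threshold_ms
        self.entries = []  # (elapsed_ms, sql) tuples

    def execute(self, sql, params=()):
        start = time.perf_counter()
        # Fetch inside the timer: SQLite does much of its work while rows are stepped.
        rows = self.conn.execute(sql, params).fetchall()
        elapsed_ms = (time.perf_counter() - start) * 1000
        if elapsed_ms >= self.threshold_ms:
            self.entries.append((elapsed_ms, sql))
        return rows

    def worst(self, n=5):
        """Return the n slowest logged statements, slowest first."""
        return sorted(self.entries, reverse=True)[:n]


conn = sqlite3.connect(":memory:")
log = SlowQueryLogger(conn, threshold_ms=0.0)  # 0 ms logs everything, handy for a demo
log.execute("SELECT 1")
count_rows = log.execute(
    "WITH RECURSIVE n(x) AS (SELECT 1 UNION ALL SELECT x + 1 FROM n WHERE x < 10000) "
    "SELECT COUNT(*) FROM n"
)
for elapsed_ms, sql in log.worst():
    print(f"{elapsed_ms:8.3f} ms  {sql[:60]}")
```

In practice you would set the threshold to something like your latency budget and ship the entries to your existing logging or APM pipeline.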

Does adding more RAM automatically fix slow queries?

Unfortunately, no. Throwing more memory at the problem lets your database cache a larger share of its data in RAM, which might act as a temporary band-aid. However, poorly written queries that trigger massive full table scans or inefficient nested loops keep getting more expensive as your data grows, and they will eventually outpace whatever hardware you add. Hardware upgrades are expensive; they shouldn’t replace writing optimized queries.

What is an execution plan?

Think of an execution plan as a visual or textual roadmap built by the database optimizer. The engine estimates the cost of several candidate strategies for running your query and picks the one it expects to be cheapest. Reviewing this plan gives developers a behind-the-scenes look at exactly how their joins and indexes are (or aren’t) being used.

Conclusion

Here’s the reality: database optimization isn’t a “one-and-done” chore you can just check off your to-do list. It’s an ongoing practice that needs to evolve as your application scales and your data continues to grow. By stepping away from sloppy commands, embracing smart indexing, and taking the time to read those execution plans, you’ll drastically cut down server load and squash latency.

My advice? Start small. Use your logs or monitoring tools to find your top five most expensive queries today. Apply the core principles we’ve covered in this SQL performance tuning guide to those specific bottlenecks. You’ll be amazed at how quickly you see faster load times, much happier users, and a vastly more reliable infrastructure.